Engineering teams in 2026 work in a different operating environment than they did even two years ago. AI-assisted development has changed how code gets written and reviewed. Distributed teams are normal. Leadership expects a cleaner line between engineering work and business results.

That shift breaks many old habits. Tickets closed, hours logged, and lines of code never said much about value, though teams kept using them because they were easy to count. Good measurement now needs a wider view. Flow matters. Quality matters. Business impact matters. Team sustainability matters, too.

Why old output metrics break down

A team can close 40 tickets in a sprint and still leave customers with a slow product, a fragile release, and a burned-out review queue. The numbers look busy. The system does not improve.

The problem is not measurement itself. The problem is weak measurement. Good engineering team metrics show whether work moves cleanly, lands safely, changes user or business outcomes, and leaves the team in decent shape for the next quarter. That is a better lens than counting visible activity.

A practical framework

The cleanest way to think about this is through four categories:

- Flow: How does work move from idea to production?

- Quality and reliability: How often does work break, regress, or trigger recovery efforts?

- Business impact: What changed after release?

- Team health and sustainability: Can the team keep performing without piling up friction or fatigue?

Most software engineering team metrics worth tracking fall into one of those four buckets. The same logic applies to most engineering team performance metrics, even when the stack, domain, or team shape changes.

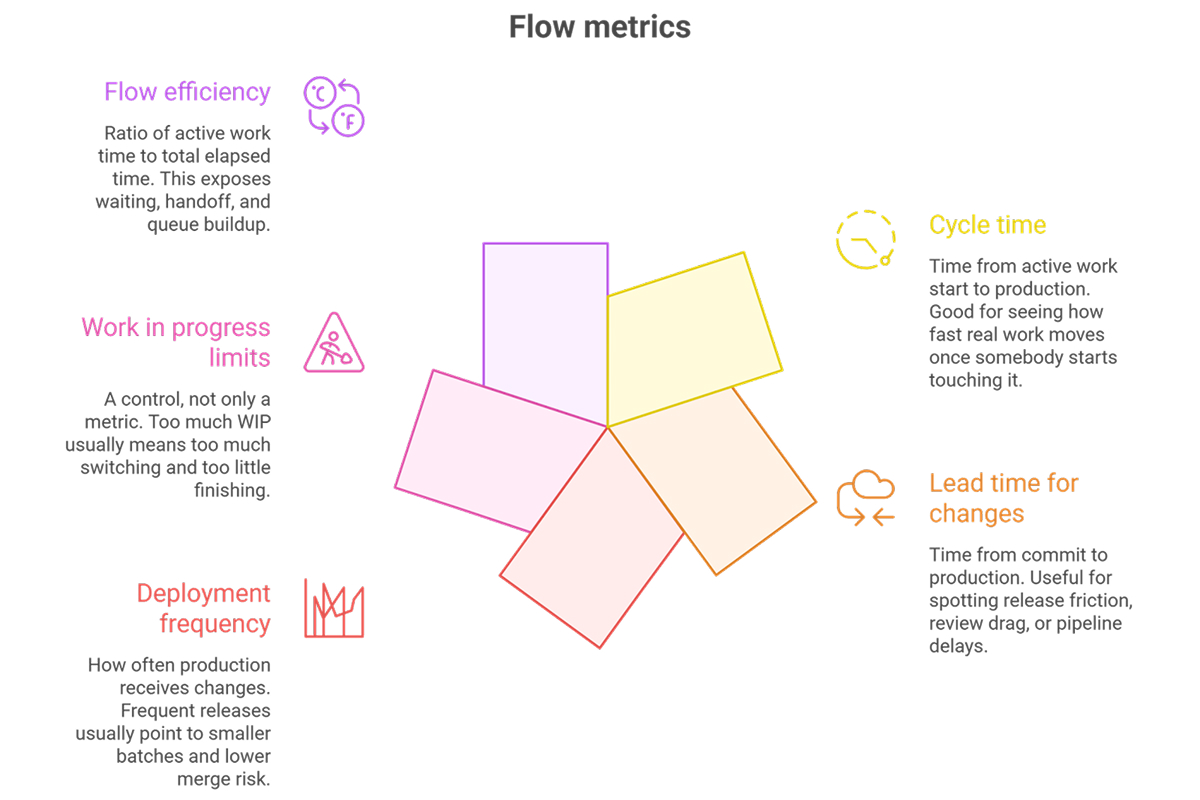

1. Flow metrics

Flow metrics answer a simple question. How hard is it for work to move through your system?

A lot of delivery pain hides in queues. Work waits for review. Pull requests grow too large. QA becomes a bottleneck. Releases pile up behind one staff engineer who knows the deployment process better than everyone else.

Useful flow metrics include:

- Cycle time: Time from active work start to production. Good for seeing how fast real work moves once somebody starts touching it.

- Lead time for changes: Time from commit to production. Useful for spotting release friction, review drag, or pipeline delays.

- Deployment frequency: How often production receives changes. Frequent releases usually point to smaller batches and lower merge risk.

- Work in progress limits: A control, not only a metric. Too much WIP usually means too much switching and too little finishing.

- Flow efficiency: Ratio of active work time to total elapsed time. This exposes waiting, handoff, and queue buildup.

Cycle time is often where teams first see the truth. A feature estimated at three days takes twelve. Not because coding took twelve days, but because work waited in review for two, sat in QA for three, and missed a release train. Once that pattern becomes visible, the conversation changes. Teams stop arguing about individual effort and start fixing the system.
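The arithmetic behind that twelve-day feature can be sketched in a few lines. This is a minimal illustration, not a prescribed formula: only the twelve-day total and the review and QA waits come from the example above. The three days of active coding and the four days of remaining wait are assumptions made so the numbers add up, and `flow_efficiency` is a hypothetical helper name.

```python
from datetime import timedelta


def flow_efficiency(active: timedelta, elapsed: timedelta) -> float:
    """Ratio of active work time to total elapsed time (0.0 to 1.0)."""
    if elapsed <= timedelta(0):
        raise ValueError("elapsed time must be positive")
    return active / elapsed


# Hypothetical stage breakdown for the twelve-day feature.
stages = {
    "coding": timedelta(days=3),          # assumed: matches the original estimate
    "waiting_review": timedelta(days=2),  # from the example
    "waiting_qa": timedelta(days=3),      # from the example
    "other_waiting": timedelta(days=4),   # assumed: release train and handoffs
}

elapsed = sum(stages.values(), timedelta(0))  # cycle time
efficiency = flow_efficiency(stages["coding"], elapsed)

print(f"cycle time: {elapsed.days} days, flow efficiency: {efficiency:.0%}")
```

Under these assumptions, three quarters of the elapsed time is waiting, which is why the fix tends to sit in queues and handoffs and not in individual effort.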

A useful habit here is slicing by work type. Bug fixes, customer-facing features, platform work, and migration tasks behave differently. One blended median hides too much.
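A small sketch shows how a blended median can mislead. The records below are invented for illustration, and the work type labels and the `median_cycle_time_by_type` helper are assumptions, not fields from any particular tracker.

```python
from collections import defaultdict
from statistics import median

# Hypothetical completed work items: (work_type, cycle_time_in_days).
completed = [
    ("bug_fix", 1.0), ("bug_fix", 2.0), ("bug_fix", 1.5),
    ("feature", 6.0), ("feature", 12.0), ("feature", 9.0),
    ("migration", 20.0), ("migration", 30.0),
]


def median_cycle_time_by_type(records):
    """Group cycle times by work type and return the median for each group."""
    grouped = defaultdict(list)
    for work_type, days in records:
        grouped[work_type].append(days)
    return {work_type: median(days) for work_type, days in grouped.items()}


blended = median(days for _, days in completed)
by_type = median_cycle_time_by_type(completed)

print(f"blended median: {blended} days")
for work_type, days in by_type.items():
    print(f"{work_type}: {days} days")
```

With this sample data the blended median does not describe any of the three work types well, which is the case for reporting them separately.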

2. Quality and reliability metrics

Speed without quality does not stay fast for long. Teams that ship unstable work usually pay for it in later sprints, during incidents, and when roadmap work slips because time is spent cleaning up rushed releases. Reliability metrics matter because they connect delivery speed to production reality.

Useful quality and reliability metrics include:

- Change failure rate: Percentage of deployments that trigger rollback, hotfix, incident, or customer-visible defect.

- Defect escape rate: Defects found after release compared with defects caught earlier.

- Incident frequency: How often production failures interrupt normal service.

- Mean time to recovery: Time from failure detection to restored service.

- Test coverage and stability: Broad coverage helps, though flaky tests often do more damage than low coverage percentages.

A mature team does not chase a perfect change failure rate on its own. That often creates fear-driven release behavior, where teams batch changes, delay deploys, or avoid necessary risk. Trend analysis is more useful. If deployment frequency rises while change failure rate stays stable, that is healthy. If deployments stay fast but recovery time keeps increasing, resilience work is overdue.
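As a rough sketch, change failure rate and mean time to recovery can be computed from a list of deployment records. The `Deployment` shape below is an assumption for illustration. Real data usually has to be joined from CI and incident systems, and what counts as a failed deployment is a definition each team has to settle for itself.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Deployment:
    # Hypothetical record shape, not taken from any specific tool.
    deployed_at: datetime
    failed: bool = False                    # rollback, hotfix, incident, or visible defect
    detected_at: Optional[datetime] = None  # when the failure was detected
    restored_at: Optional[datetime] = None  # when service was restored


def change_failure_rate(deploys) -> float:
    """Share of deployments marked as failed."""
    if not deploys:
        return 0.0
    return sum(d.failed for d in deploys) / len(deploys)


def mean_time_to_recovery(deploys) -> Optional[timedelta]:
    """Average time from failure detection to restored service."""
    durations = [
        d.restored_at - d.detected_at
        for d in deploys
        if d.failed and d.detected_at and d.restored_at
    ]
    if not durations:
        return None
    return sum(durations, timedelta(0)) / len(durations)


deploys = [
    Deployment(datetime(2026, 1, 5, 9, 0)),
    Deployment(datetime(2026, 1, 6, 9, 0)),
    Deployment(
        datetime(2026, 1, 7, 9, 0),
        failed=True,
        detected_at=datetime(2026, 1, 7, 10, 0),
        restored_at=datetime(2026, 1, 7, 10, 45),
    ),
    Deployment(datetime(2026, 1, 8, 9, 0)),
]

print(change_failure_rate(deploys), mean_time_to_recovery(deploys))
```

Running the same two functions over successive months gives the trend view described above, which is usually more informative than any single reading.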

3. Business impact metrics

This is the category engineering teams often skip because the signal feels less direct. A pull request has a clear timestamp. Feature adoption or revenue impact is harder to measure. That does not make business outcomes optional.

If leaders only review flow and reliability, they learn whether delivery is efficient, not whether it matters. A team can build the wrong thing efficiently for months.

Useful business impact metrics include:

- Feature adoption: Usage depth, repeat usage, and retention after launch.

- Revenue influence: Direct conversion lift, expansion support, or reduction in lost deals tied to delivery work.

- Customer retention impact: Churn reduction tied to reliability, performance, or workflow fixes.

- Performance improvements: Faster page loads, shorter job runtimes, lower query latency, fewer retries.

- Cost efficiency gains: Lower infrastructure spend, fewer failed jobs, leaner compute usage, fewer support hours.

This does not mean every team needs a dollar figure on every story, because real systems are rarely that tidy. Still, quarterly planning should create a clear link between technical work and the outcome. A platform team might track deployment lead time, a backend team might measure failed checkout retries, and a data platform team might follow analyst wait time. The main point is simple. Engineering output alone is not the finish line. What matters is the effect that work has on users and the business.
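Feature adoption is one of the easier outcome signals to approximate from product events. The sketch below assumes a hypothetical event log of `(user_id, day)` pairs and two working definitions that are assumptions, not standards: adoption rate is the share of active users who used the feature at least once, and repeat usage is the share of adopters who used it on two or more distinct days.

```python
from collections import defaultdict


def adoption_summary(active_users: set, feature_events: list) -> dict:
    """Summarize adoption and repeat usage for one feature over one period."""
    days_by_user = defaultdict(set)
    for user_id, day in feature_events:
        if user_id in active_users:  # ignore events from users outside the cohort
            days_by_user[user_id].add(day)

    adopters = len(days_by_user)
    repeat_users = sum(1 for days in days_by_user.values() if len(days) >= 2)
    return {
        "adoption_rate": adopters / len(active_users) if active_users else 0.0,
        "repeat_usage_rate": repeat_users / adopters if adopters else 0.0,
    }


# Hypothetical data: four active users, two of whom tried the feature.
active_users = {"a", "b", "c", "d"}
feature_events = [
    ("a", "2026-01-05"), ("a", "2026-01-06"),  # used on two distinct days
    ("b", "2026-01-05"), ("b", "2026-01-05"),  # used twice on the same day
]

summary = adoption_summary(active_users, feature_events)
print(summary)
```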

4. Team health and sustainability metrics

Teams do not stay effective by accident. They stay effective when the working environment protects focus, keeps review loops short, spreads knowledge, and avoids chronic overload.

Useful team health metrics include:

- Workload balance: Whether critical work keeps landing on the same people or bouncing between teams unevenly.

- Context switching frequency: How often engineers jump across repos, services, incidents, and unrelated ticket streams.

- Review turnaround time: Time to first review, and time to merge once review starts.

- Focus time protection: Amount of meeting-free time available for real engineering work.

- Engagement or burnout indicators: Survey signals, sustained after-hours load, rising incident fatigue, or steady review debt.

These metrics should stay at the team level. Once leaders start using them as individual ranking tools, data quality collapses. People adapt fast. Reviews become shallow but quick. Meetings disappear from calendars while chat interruptions go up. Nobody wins.
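One way to keep review turnaround at the team level is to aggregate before anyone looks at the data. The sketch below assumes a hypothetical pull request record with `opened_at` and `first_review_at` timestamps, and deliberately carries no author or reviewer fields.

```python
from datetime import datetime
from statistics import median


def median_hours_to_first_review(pull_requests):
    """Team-level median wait for a first review, in hours.

    Pull requests that have not been reviewed yet are skipped, so a growing
    unreviewed backlog needs to be tracked separately.
    """
    waits = [
        (pr["first_review_at"] - pr["opened_at"]).total_seconds() / 3600
        for pr in pull_requests
        if pr.get("first_review_at") is not None
    ]
    return median(waits) if waits else None


# Hypothetical sample: three reviewed pull requests and one still waiting.
pull_requests = [
    {"opened_at": datetime(2026, 1, 5, 9, 0), "first_review_at": datetime(2026, 1, 5, 11, 0)},
    {"opened_at": datetime(2026, 1, 5, 9, 0), "first_review_at": datetime(2026, 1, 5, 14, 0)},
    {"opened_at": datetime(2026, 1, 5, 9, 0), "first_review_at": datetime(2026, 1, 6, 11, 0)},
    {"opened_at": datetime(2026, 1, 6, 9, 0), "first_review_at": None},
]

team_median = median_hours_to_first_review(pull_requests)
print(f"median hours to first review: {team_median}")
```

A median is used here because a single very slow review would otherwise dominate the average.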

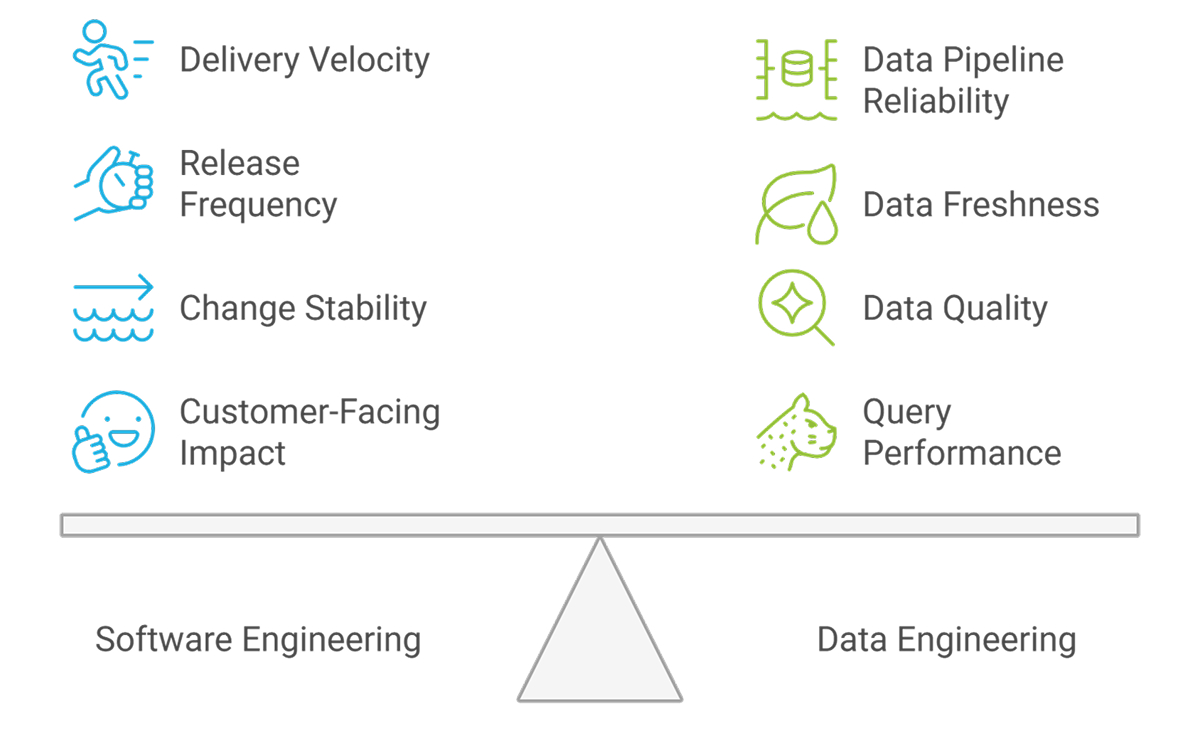

Software vs. Data Engineering Team Metrics

The phrase “data engineering team metrics” often gets lumped in with standard delivery dashboards. That usually leads to the wrong conclusions. Application teams and data teams share some signals, though the work shape is not the same.

Software engineering teams often care most about:

- Delivery velocity: How reliably features move from backlog to release.

- Release frequency: How often teams ship value into production.

- Change stability: Whether new releases create rollback or incident risk.

- Customer-facing feature impact: Whether shipped work improves usage, conversion, retention, or satisfaction.

Application teams live closer to the user surface. Feature speed, release quality, and production reliability usually dominate.

Data engineering teams usually care more about:

- Data pipeline reliability: Whether jobs run on schedule without manual rescue.

- Data freshness and latency: How long downstream users wait for trustworthy data.

- Data quality: Accuracy, completeness, consistency, and schema stability.

- Query performance: Whether analysts, services, and models get data fast enough for the job.

- SLA adherence: Whether the platform's promises to internal consumers hold over time.

A product squad missing a weekly release is painful. A data platform missing freshness targets during finance close or model retraining is a different kind of pain. The metric system should align with the team’s actual responsibilities, not a generic template.
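For a data team, freshness and SLA adherence can be combined into one simple check. The sketch below assumes each pipeline load is recorded as a pair of timestamps: when the source data was produced and when it became available downstream. The one-hour target and the function name are hypothetical.

```python
from datetime import datetime, timedelta


def freshness_sla_adherence(loads, target: timedelta) -> float:
    """Share of loads whose lag (available minus produced) met the target."""
    if not loads:
        raise ValueError("at least one load is required")
    met = sum(1 for produced, available in loads if available - produced <= target)
    return met / len(loads)


# Hypothetical loads: (source data produced at, data available downstream at).
loads = [
    (datetime(2026, 1, 5, 0, 0), datetime(2026, 1, 5, 0, 30)),  # 30 min lag
    (datetime(2026, 1, 5, 1, 0), datetime(2026, 1, 5, 1, 50)),  # 50 min lag
    (datetime(2026, 1, 5, 2, 0), datetime(2026, 1, 5, 3, 30)),  # 90 min lag, misses target
    (datetime(2026, 1, 5, 3, 0), datetime(2026, 1, 5, 4, 0)),   # exactly 60 min
]

adherence = freshness_sla_adherence(loads, target=timedelta(hours=1))
print(f"freshness SLA adherence: {adherence:.0%}")
```

Weighting misses by business timing, such as finance close, is left out of this sketch but is often what makes the number meaningful.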

Key metrics for remote engineering teams

Remote work does not need activity monitoring. Remote work needs friction monitoring.

The key metrics for remote engineering teams are the ones that expose coordination drag without punishing autonomy. Review time matters more when half the team sleeps during the other half’s workday. Documentation quality matters more when hallway clarification is no longer available. Ownership clarity matters more when nobody sits near the person who built the service two years ago.

Useful remote signals include:

- Async collaboration efficiency: Pull request review time, comment response latency, and decision turnaround.

- Documentation completeness and discoverability: Whether runbooks, service docs, and design records exist and are easy to find.

- Cross-time-zone handoff delay: Idle time between one region finishing and the next region picking up work.

- Meeting load versus deep work time: Whether coordination overhead is crowding out delivery.

- Knowledge silos and ownership clarity: Whether support, release, and incident response depend on too few people.
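Knowledge concentration is one of the remote signals that can be approximated from existing data. The sketch below assumes a hypothetical list of `(service, contributor)` pairs, for example from merged changes or incident responses, and reports only the share handled by the most active contributor per service. It returns no names, which keeps the result a service-level risk signal.

```python
from collections import Counter, defaultdict


def top_contributor_share(changes):
    """For each service, the fraction of changes made by its most active contributor."""
    per_service = defaultdict(Counter)
    for service, contributor in changes:
        per_service[service][contributor] += 1
    return {
        service: counts.most_common(1)[0][1] / sum(counts.values())
        for service, counts in per_service.items()
    }


# Hypothetical change log.
changes = [
    ("billing", "alice"), ("billing", "alice"), ("billing", "alice"),
    ("billing", "alice"), ("billing", "bob"),
    ("search", "alice"), ("search", "bob"), ("search", "carol"), ("search", "dan"),
]

shares = top_contributor_share(changes)
for service, share in shares.items():
    print(f"{service}: {share:.0%} of changes from one contributor")
```

A high share on a critical service suggests a documentation or pairing gap worth closing before that person is on leave during an incident.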

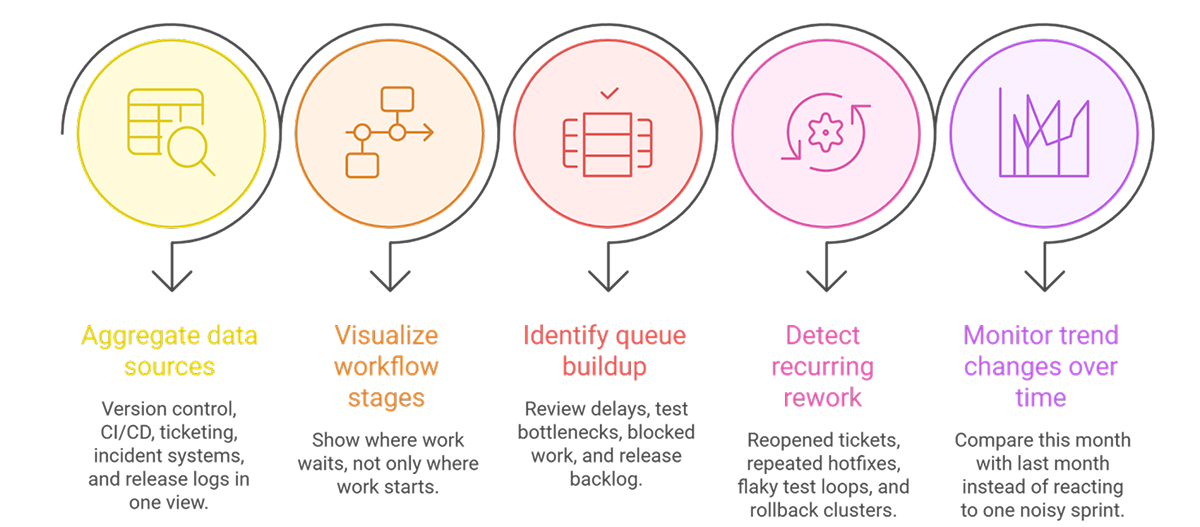

Using tools without turning them into surveillance

Tools matter because raw delivery data is scattered across multiple systems. Commit history sits in version control. Build results sit in CI. Incident data sits somewhere else. Ticket state changes live in the tracker. Without aggregation, teams end up reading fragments.

Good tools pull those fragments into one view, which makes waiting and bottlenecks visible.

The danger starts when a dashboard becomes a compliance tool. Teams stop trusting the numbers. Leaders start reading movement as intent. The best tooling supports diagnosis, retrospectives, and roadmap adjustment. Surveillance ruins all three.

Roadmap alignment and common mistakes

Metrics only help when tied to planning and review cycles. A team running a quarterly reliability objective should not wait six months to decide whether the work helped. At the same time, changing the whole dashboard every two weeks creates noise.

A practical rhythm looks like this:

- Quarterly alignment: Set a small set of outcome metrics tied to roadmap priorities.

- Monthly review: Check trends, adjust focus, and catch drift early.

- Retros and leadership syncs: Review the same signals in both places so local pain connects with portfolio decisions.

Common mistakes show up fast:

- Vanity metrics: Commit count, lines of code, raw ticket volume.

- Individual ranking from team data: Fast path to distorted behavior and broken trust.

- Single-metric optimization: Faster deploys with worse recovery, more features with worse adoption.

- Activity measurement instead of impact measurement: Busy systems that do not improve outcomes.

The cleanest metric systems stay small. Teams do not need thirty charts. They need a handful of signals that match current priorities and a habit of reading them honestly.

Final Thoughts

The best engineering metrics systems in 2026 are not bigger. They are more focused, more practical, and more closely tied to how teams actually create value. They help teams move work with less friction, ship with less risk, connect engineering effort to meaningful business outcomes, and protect the conditions that support steady, sustainable performance over time. Good metrics do not just describe activity. They help teams see what needs attention, what is improving, and where work is creating real results.

FAQs

1. What are the most important engineering team metrics to track in 2026?

Start with flow, reliability, business outcome, and team health. A practical baseline includes cycle time, deployment frequency, change failure rate, mean time to recovery, feature adoption, and review turnaround time. Those cover delivery speed, release safety, value, and sustainability without drifting into vanity tracking.

2. How do engineering metrics tools help identify bottlenecks in development processes?

They pull signals from source control, CI/CD, ticketing, and incident systems into one view. That makes waiting visible. Review queues, flaky test loops, blocked tickets, and release backlogs stop hiding inside separate tools. Trend views also help teams distinguish between a bad week and a real system problem.

3. What’s the difference between metrics for teams and metrics about teams?

Metrics for teams support decisions about work. Metrics about teams often turn into observation without action. Cycle time, failure rate, and handoff delay help improve the system. Hours online or message count mostly describe visible activity and often push teams toward shallow, defensive behavior.

4. What tools do engineering managers use to track team health effectively?

Managers usually combine engineering intelligence tools, ticketing data, review analytics, incident records, calendar patterns, and short pulse surveys. No single dashboard tells the whole story. The useful setup mixes workflow data with signs of overload, review debt, meeting pressure, and repeated interrupt-driven work.

5. Should engineering roadmaps be updated monthly or quarterly?

Quarterly planning still works best for direction. Monthly review works better for correction. Most teams need both. Set outcome targets by quarter, then inspect trends each month and adjust scope, sequencing, or support work when signals show drift, risk, or a mismatch between planned value and delivery reality.