Abstract

AI-assisted and agentic development models are changing the structure of engineering work. As code generation, refactoring, and cross-system orchestration increasingly shift from direct human authorship to AI-mediated workflows, traditional productivity metrics lose interpretive reliability. Throughput can rise while review depth thins, rework concentrates, and governance capacity drifts out of alignment with delivery velocity. Adoption metrics, meanwhile, signal tool usage rather than value creation.

This paper examines the emerging assessment problem facing engineering leadership: how to measure performance when output-centric proxies no longer map cleanly to understanding, stability, or risk. Drawing on the investigative governance framework introduced in ‘Managing Software Quality and Risk in the Era of AI-Assisted Development’, we argue that engineering performance must be reframed as controlled acceleration: the ability to increase delivery velocity without degrading stability or governance integrity.

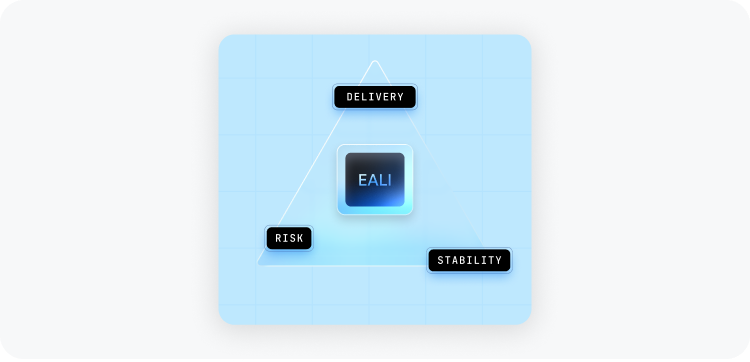

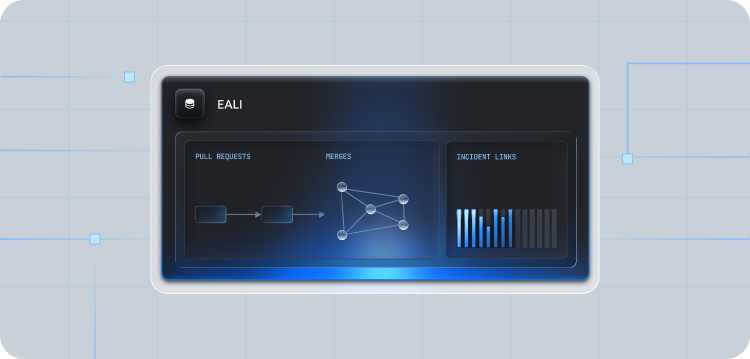

We introduce the Engineer Automation Leverage Index (EALI), a composite framework that formalizes leverage across three dimensions: delivery acceleration, stability under change, and risk discipline across control surfaces. Grounded in observable repository and workflow telemetry and requiring no prompt-level surveillance, EALI serves as a north star index for agentic engineering, restoring interpretability to performance assessment as development shifts from human-centric implementation to spec-led and AI-mediated orchestration.

1. The Assessment Problem in the Agentic Era

In our whitepaper ‘Managing Software Quality and Risk in the Era of AI-Assisted Development’, we established that AI-assisted development alters the control surface of software delivery. It shifts where decisions are made, where evidence is produced, and where oversight must be applied.

This paper focuses on a related but distinct problem: measurement.

For decades, software organisations relied on output-centric proxies:

- Code volume

- Pull request throughput

- Cycle time

- Defect rates

These signals worked because authorship, understanding, and accountability were tightly coupled. Engineers wrote code directly, accumulated system intuition through immersion, and bore traceable responsibility for their changes. Cognitive effort was spread fairly evenly across the task set, so visible output tracked the effort and understanding behind it.

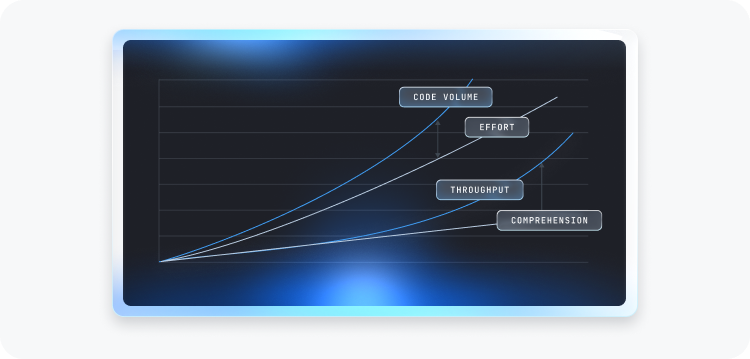

As development shifts along the spectrum from assistive to generative to orchestrated and agentic workflows, this coupling weakens as effort redistributes.

- Code volume decouples from effort.

- Throughput decouples from comprehension.

- Review artifacts persist while their meaning shifts.

This amounts to a form of metric drift: familiar indicators retain their surface form while their interpretive content changes.

The assessment challenge is not that existing metrics are incorrect. It is that they increasingly measure different things than leadership assumes.

2. From Activity to Leverage

In AI-augmented development environments, activity increases almost by definition. Code generation accelerates. Pull request volume rises. Refactoring cycles shorten. Changes propagate more quickly across repositories and services.

At first glance, this appears unambiguously positive. Many organisations experience measurable gains in throughput and cycle time within weeks of AI adoption.

However, increased activity does not necessarily imply increased leverage.

Leverage, in this context, is not raw speed. It is acceleration that remains stable and governable. It reflects an organisation’s ability to convert automation into durable progress without amplifying fragility or eroding control.

As development shifts toward spec-led and agentic workflows, three recurring distortions emerge.

Velocity can hide instability

Throughput may rise while rework loops increase. Pull requests merge faster, yet a growing proportion require post-merge corrections or rapid follow-up changes. Review cycles compress as engineers rely more heavily on generated summaries and passing tests. The surface signal is acceleration; the underlying pattern may be accumulating entropy.

Adoption can hide stagnation

AI usage metrics (prompt counts, generation frequency, tool penetration) increase, yet measurable improvement in meaningful outcomes may plateau. Automation becomes pervasive without producing proportional gains in delivery quality or architectural coherence. Activity is visible; leverage is not.

Quality signals can hide governance erosion

Code passes tests. Static checks remain green. Review approvals are recorded. Yet the concentration of change within authority-bearing surfaces may increase. Structural coupling may deepen. Blast radius may expand without corresponding increases in independent scrutiny. Familiar quality signals retain their form while their interpretive meaning shifts.

These distortions do not imply failure. They reflect a change in how work is produced.

Under agentic workflows, performance cannot be reliably inferred from visible output alone. It must be evaluated relative to stability and governance posture. Acceleration that outpaces oversight creates a gap between what is delivered and what is understood.

In this context, performance must be reframed from activity to risk-adjusted output. The central assessment question becomes: how much acceleration can a team safely manage?

3. The Data Science Analogy

The assessment distortion emerging in agentic engineering resembles a well-known challenge in evaluating data science teams.

Data scientists typically operate in environments characterized by high tool interaction, sparse visible artifacts, and large variance in impact. A data scientist may execute hundreds of exploratory runs, adjust features iteratively, and discard numerous intermediate models, yet produce only a small number of visible outputs. The measurable artifact (a model, a dashboard, a report) represents the end state of a long sequence of invisible decisions.

As a result, performance cannot be inferred from superficial activity signals. Lines of code written, notebook cells executed, or hours spent in experimentation are weak proxies for value. Instead, leverage resides in upstream activities: problem framing, feature selection, hypothesis discipline, error containment, and the capacity to avoid unproductive paths. The contribution profile is highly non-linear. Small conceptual decisions can have disproportionate downstream consequences, while high volumes of activity may produce little lasting impact. Evaluation therefore shifts from measuring mechanical output to assessing the quality of decision-making under uncertainty.

Agentic engineering increasingly exhibits similar characteristics.

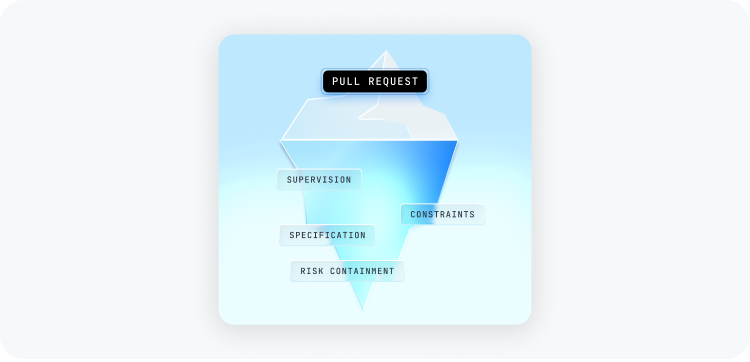

As AI systems assume more of the mechanical work of implementation, engineers spend less time constructing systems from first principles and more time shaping intent, defining constraints, supervising generation, and resolving exceptions. Code becomes, in many cases, the artifact of orchestration rather than direct authorship.

This shift introduces a second-order assessment problem: tacit system knowledge becomes harder to observe and harder to account for within performance and governance frameworks.

In traditional development environments, engineers accumulated architectural intuition through sustained immersion in specific subsystems. Although tacit knowledge was never directly measurable, it was indirectly visible through patterns of engagement: depth of contribution, continuity of ownership, and involvement in review and incident response. Interaction served as a rough proxy for understanding.

As mechanical interaction decreases, that proxy weakens. Engineers may traverse more of the system while spending less time constructing any single part of it. Engagement becomes broader but shallower. Tacit knowledge may still develop, but under different conditions and with fewer observable signals.

This dynamic parallels the data science problem. In both cases, the most consequential work occurs upstream of visible artifacts. In both cases, traditional activity signals become unreliable indicators of capability. The measurement distortion is not that output disappears, but that output no longer reflects where leverage and understanding actually reside.

In this setting:

- Engineers shape constraints rather than write every line.

- They supervise generation rather than construct incrementally.

- They intervene selectively rather than continuously.

- They allocate trust across tools and workflows rather than execute each step manually.

As implementation becomes partially automated, performance variance shifts upstream. It resides in specification clarity, supervision depth, escalation timing, and risk containment discipline. The visible artifact, such as the merged pull request, may conceal substantial invisible iteration and judgment.

The analogy, however, has limits.

Data science is typically exploratory and probabilistic; agentic engineering is operational and cumulative. Data science outputs are often bounded experiments; engineering changes integrate into long-lived systems with ongoing dependencies and governance obligations. The risk profile of engineering work is therefore structurally higher. A flawed model can be retrained; a flawed system change may accrete dependencies and become economically irreversible.

The analogy is therefore not about domain equivalence, but about measurement structure.

In both domains, observable output becomes a weak proxy for leverage when upstream reasoning and tool mediation dominate the production process. In both, the most consequential contributions occur in how constraints are defined and how risk is contained rather than in the mechanical act of construction.

For engineering leadership, this reframes the central assessment challenge. The question is no longer whether AI produces “good code,” nor whether engineers are active, but whether the organisation can still observe and evaluate leverage under conditions of mediated production.

If engineering work increasingly consists of high-leverage inputs applied to generative systems, then measurement must shift accordingly. It must capture not only what is produced, but how safely and sustainably acceleration is absorbed by the organisation.

In this sense, the data science analogy provides a frame of reference: performance becomes less about visible effort and more about calibrated amplification. The task of assessment is to distinguish raw acceleration from controlled acceleration.

That distinction motivates the leverage model introduced in the next section.

4. Measuring Controlled Acceleration: The Leverage Model

If output-centric metrics drift under agentic development, what replaces them?

The answer is not a new single KPI. The failure mode is structural; the response must be layered.

The core insight is that engineering leverage under automation has three distinct dimensions. Acceleration alone is not leverage. Acceleration must be evaluated against stability and governance integrity.

Milestone’s Engineer Automation Leverage Index (EALI) formalizes this leverage model and serves as the anchor metric in our next-generation assessment framework for agentic engineering.

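EALI is a composite index consisting of three components:

- Delivery acceleration

- Stability Leverage (stability under change)

- Risk Discipline (risk discipline across control surfaces)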

Each component is normalized relative to the team’s historical baseline and scaled to a common range. The final index is computed as a weighted aggregation of these three dimensions, reflecting the structural requirement that acceleration be sustained by stability and governance discipline.

By design, the weighting framework ensures that increases in raw acceleration cannot fully offset deterioration in stability or governance posture. EALI therefore encodes a simple structural rule: acceleration is leverage only when stability and governance scale with it.
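The normalization and aggregation described above can be illustrated with a minimal Python sketch. The paper does not publish the EALI formula, so everything here is an assumption made for illustration: the ratio-based `normalize` function, the choice of a weighted geometric mean, and the example weights are all hypothetical. The geometric mean is used only because, with components bounded at 1, a weak stability or risk score places a ceiling on the index that no delivery score can lift.

```python
from math import prod


def normalize(current: float, baseline: float, higher_is_better: bool = True) -> float:
    """Map a raw metric into (0, 1) relative to the team's own baseline.

    A value equal to the baseline scores 0.5. The r / (1 + r) form is an
    illustrative assumption, not a published EALI formula.
    """
    if current <= 0 or baseline <= 0:
        raise ValueError("metrics must be positive")
    ratio = current / baseline if higher_is_better else baseline / current
    return ratio / (1.0 + ratio)


def leverage_index(delivery: float, stability: float, risk: float,
                   weights: tuple[float, float, float] = (0.4, 0.3, 0.3)) -> float:
    """Weighted geometric mean of three component scores in [0, 1].

    The weights are hypothetical. Because every component is at most 1,
    the index can never exceed stability ** w_stability, however high
    the delivery score is.
    """
    components = (delivery, stability, risk)
    if any(not 0.0 <= c <= 1.0 for c in components):
        raise ValueError("component scores must lie in [0, 1]")
    if abs(sum(weights) - 1.0) > 1e-9:
        raise ValueError("weights must sum to 1")
    return prod(c ** w for c, w in zip(components, weights))
```

Under these assumptions, a team with a maximal delivery score but a stability score of 0.25 cannot exceed an index of `0.25 ** 0.3`, and a component score of zero drives the whole index to zero.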

The following sections describe the three dimensions in turn.

4.2 Stability Leverage: Containing Instability Under Speed

Acceleration is only leverage if it does not amplify instability.

In AI-assisted environments, instability often emerges not through obvious failure, but through rework loops, compressed review, and short-horizon corrections. A system can appear productive while accumulating fragility.

Stability Leverage measures whether increased velocity remains clean. It examines patterns such as:

- Post-review change amplification

- Rapid follow-up corrections

- Reversion frequency

- Review strain relative to baseline

The key dynamic is divergence: when change complexity rises while scrutiny remains flat, governance debt accumulates.

Stability Leverage therefore captures whether acceleration is being absorbed without increasing rework pressure or thinning oversight. It is a signal of structural resilience under speed.
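As a rough illustration of how two of these patterns might be derived from repository telemetry, the sketch below computes a rapid follow-up rate and a reversion rate from merged pull requests. The `MergedPR` record, the 48-hour window, and the use of file overlap as a stand-in for "follow-up correction" are assumptions made for this example, not definitions taken from EALI.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class MergedPR:
    """Hypothetical minimal record of a merged pull request."""
    pr_id: int
    merged_at: datetime
    files: frozenset[str]
    is_revert: bool = False


def stability_signals(prs: list[MergedPR],
                      window: timedelta = timedelta(hours=48)) -> dict[str, float]:
    """Return the follow-up rate and revert rate for a set of merged PRs.

    A PR counts as 'followed up' when a later PR touching at least one of
    the same files merges within `window` (an assumed heuristic).
    """
    ordered = sorted(prs, key=lambda p: p.merged_at)
    total = len(ordered)
    if total == 0:
        return {"follow_up_rate": 0.0, "revert_rate": 0.0}

    followed_up = 0
    for i, pr in enumerate(ordered):
        for later in ordered[i + 1:]:
            if later.merged_at - pr.merged_at > window:
                break  # list is sorted, so no later PR can fall in the window
            if pr.files & later.files:
                followed_up += 1
                break

    reverts = sum(1 for p in ordered if p.is_revert)
    return {"follow_up_rate": followed_up / total, "revert_rate": reverts / total}
```

Rates of this kind become meaningful only when compared against the same team's historical baseline, in line with the normalization described earlier.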

4.3 Risk Discipline: Preserving Control Boundaries

Even clean acceleration can erode governance if it concentrates change within sensitive areas of the system.

Agentic workflows amplify individual leverage. Engineers, assisted by AI, can modify deployment configurations, permission boundaries, orchestration logic, and structurally critical subsystems with far less friction than before. The consequence is not only increased speed, but expanded potential blast radius.

Risk Discipline measures whether this amplified leverage is altering the organisation’s control surface. It evaluates patterns such as:

- Incident amplification per unit of structural change

- Concentration of modification within authority-bearing surfaces

- Exposure of historically fragile segments

- Degradation of assurance signals under velocity

Crucially, Risk Discipline does not penalize change within sensitive areas in isolation. It evaluates deviation from calibrated exposure. The question is not whether critical surfaces are touched, but whether they are touched disproportionately as acceleration increases.
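The idea of deviation from calibrated exposure can be sketched as the share of changes touching sensitive paths, compared with the team's historical share. The path patterns below and the simple difference used as the deviation measure are hypothetical; a real deployment would define authority-bearing surfaces for its own codebase.

```python
from fnmatch import fnmatchcase

# Hypothetical patterns marking authority-bearing surfaces.
SENSITIVE_PATTERNS = ("deploy/*", "iam/*", "*.tf")


def touches_sensitive(paths: list[str],
                      patterns: tuple[str, ...] = SENSITIVE_PATTERNS) -> bool:
    """True if any changed path matches any sensitive pattern."""
    return any(fnmatchcase(path, pat) for path in paths for pat in patterns)


def exposure_share(changes: list[list[str]]) -> float:
    """Fraction of changes (each a list of paths) that touch a sensitive surface."""
    if not changes:
        return 0.0
    return sum(touches_sensitive(paths) for paths in changes) / len(changes)


def exposure_deviation(current_changes: list[list[str]], baseline_share: float) -> float:
    """Positive values mean sensitive surfaces are touched more often than
    the historical baseline; this is an illustrative measure only."""
    return exposure_share(current_changes) - baseline_share
```

A positive deviation does not by itself indicate a problem. In keeping with the paragraph above, it flags that sensitive surfaces are being touched disproportionately relative to what the team has historically handled.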

Risk Discipline therefore functions as the structural safeguard within the leverage model, ensuring that gains in velocity do not outpace governance capacity.

4.4 From Dimensions to Index

EALI aggregates these three dimensions into a normalized composite score. Each component is evaluated relative to historical baselines to prevent cross-team distortion and to detect directional drift rather than absolute magnitude.

The aggregation structure ensures that deterioration in stability or governance reduces overall leverage, even when delivery velocity increases. The index therefore reflects not raw output, but the net balance between acceleration and control.

By integrating delivery, stability, and risk discipline into a single measure, EALI provides a system-level view of how safely acceleration is being absorbed.

5. Grounding in Observable Telemetry

A leverage model is only meaningful if it is measurable without expanding surveillance or relying on speculative interpretation.

EALI is grounded entirely in observable engineering telemetry:

- Pull request flow

- Review dynamics

- Risk-weighted structural change

- Incident linkage

- Control-surface exposure

It does not require prompt logging, keystroke tracking, or semantic inspection of model reasoning. The objective is not to instrument thought, but to observe system behavior.

By anchoring the index in repository and workflow signals, EALI remains auditable, reproducible, and aligned with existing governance frameworks. It measures how work manifests in the system, not how engineers internally reason about it.

This grounding is essential. Without it, leverage becomes a theoretical construct. With it, controlled acceleration becomes observable.

6. From Metrics to Managed Acceleration

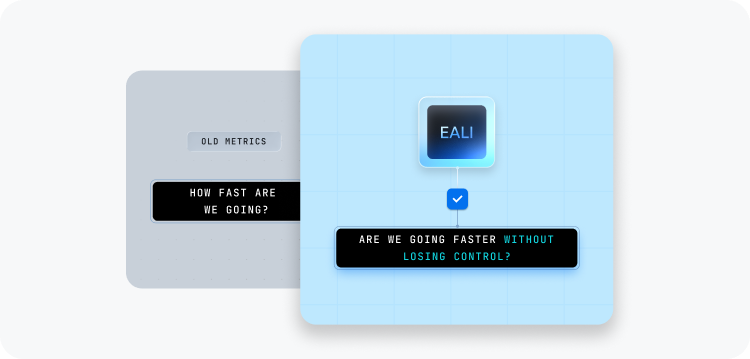

In the prior paper, we argued that software engineering analytics must evolve from passive reporting to investigative control. EALI extends that argument into assessment.

Traditional KPIs answer: How fast are we moving?

EALI answers: Are we moving faster without losing control?

It provides leadership with:

- Visibility into concentrated control-surface exposure

- Evidence that governance scales with automation

This distinction matters. When velocity increases under automation, leadership must determine whether stability and governance scale proportionally. EALI provides early warning when delivery acceleration begins to outpace review depth, risk containment, or control-surface discipline.
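One way such an early warning could work is to compare component scores across consecutive periods and flag any period in which delivery rises while stability or risk discipline falls. The sketch below assumes per-period component scores already normalized to a common range; the `PeriodScores` record, the period labels, and the tolerance threshold are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PeriodScores:
    """Hypothetical normalized component scores for one reporting period."""
    label: str
    delivery: float
    stability: float
    risk: float


def divergence_warnings(history: list[PeriodScores],
                        tolerance: float = 0.05) -> list[tuple[str, list[str]]]:
    """Flag periods where delivery rose by more than `tolerance` while
    stability and/or risk fell by more than `tolerance`."""
    warnings = []
    for prev, cur in zip(history, history[1:]):
        if cur.delivery - prev.delivery <= tolerance:
            continue  # no meaningful acceleration in this period
        lagging = [
            name for name in ("stability", "risk")
            if getattr(prev, name) - getattr(cur, name) > tolerance
        ]
        if lagging:
            warnings.append((cur.label, lagging))
    return warnings
```

The tolerance exists to avoid reacting to noise between periods; its value here is arbitrary and would need calibration against a team's own variance.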

It reframes performance measurement from activity monitoring to leverage evaluation. Acceleration is no longer interpreted in isolation, but in relation to its systemic consequences.

In this sense, EALI is not a replacement for existing delivery metrics. It is a governance-aware overlay that restores interpretability as development models evolve.

7. Conclusion

AI-assisted development is no longer an experimental phase. It is becoming the operating model of modern engineering organisations.

The central assessment challenge is not whether AI increases productivity. It is whether organisations can still observe, reason about, and govern how their systems evolve when implementation is increasingly mediated by automation.

Milestone’s Engineer Automation Leverage Index operationalizes this shift. By integrating delivery acceleration, stability containment, and governance discipline into a single framework, it measures how much acceleration a team can safely manage.

As engineering becomes increasingly agentic, low-resolution activity metrics lose interpretive authority. Code volume, pull request counts, and tool usage statistics remain observable, but their meaning weakens as reasoning, authorship, and control become distributed across humans and generative systems.

In this environment, performance cannot be inferred from speed alone. It must be understood through structured modelling of system behavior — integrating acceleration, stability, and governance signals into a coherent representation of how change propagates and how risk accumulates.

EALI is one such construct. It demonstrates how delivery velocity, rework containment, and control-surface discipline can be integrated into a unified model of controlled acceleration. It is not the final word on measurement, but an exemplar of the kind of metric architecture required in the agentic era.

As development models continue to evolve, additional constructs will emerge — capturing specification quality, intervention latency, correction-loop depth, and other signals meaningful only under mediated production. The broader lesson is not the primacy of any single index, but the necessity of moving from activity metrics to system-aware modelling.

In the agentic era, performance evaluation must mature alongside production models. The task is no longer to count effort, but to understand amplification — how much capability is being generated, how safely it is absorbed, and how deliberately it is governed.