What are the key aspects of engineering oversight?

Status

answered

Status

answered

Engineering oversight is often framed incorrectly; some teams interpret the term as approvals, reporting layers, and senior people checking work after the fact. That usually adds friction without improving the system.

The useful version is simpler. It helps a team stay technically sound, honest about risk, and stable enough to keep shipping without building hidden problems into the work.

In software teams, engineering oversight isn’t about tracking every task. It is about keeping a clear view of the decisions that shape reliability, delivery, and long-term maintenance. That includes architecture choices, production readiness, ownership gaps, security risks, and the quality bar being held under pressure.

This usually sits with leads, staff engineers, engineering managers, or principals. Although the titles may vary, the real requirement is that someone can see both the code-level reality and the broader pattern across releases and teams.

That is where engineering leadership oversight differs from day-to-day coordination. It is less about tracking activity and more about checking whether the team is building in a way that will still hold up later.

Oversight gets weak when senior engineers only step in during outages or major planning cycles. By then, most of the damage is already in place. You see it in rushed service boundaries, poor migration plans, or test suites that pass while missing real failure paths.

The better model is regular involvement without constant interruption. A design gets reviewed before implementation hardens. A slipping sprint triggers a technical conversation, not just schedule pressure. A recurring issue across teams is noticed early, rather than becoming the norm.

Software engineering oversight works best when it is part of delivery, not a final checkpoint.

A few signs that it is working:

That is not a heavy process. It is just useful visibility.

A team can look busy and still make weak technical decisions. Full sprint boards and regular releases do not say much on their own. Oversight should help distinguish motion from sound judgment.

One common problem is local optimization. A team solves its own issue in a way that pushes cost into another service or another group. Another is shallow reuse. A shared component spreads quickly, but no one has checked whether it is easy to use, secure, or properly owned.

Not every decision needs formal review. Some do need sharper questions. Why this dependency? Why is rollback difficult? Why is a low-maturity service now on a critical path? Those questions are rarely theoretical; they shape incident frequency and maintenance load.

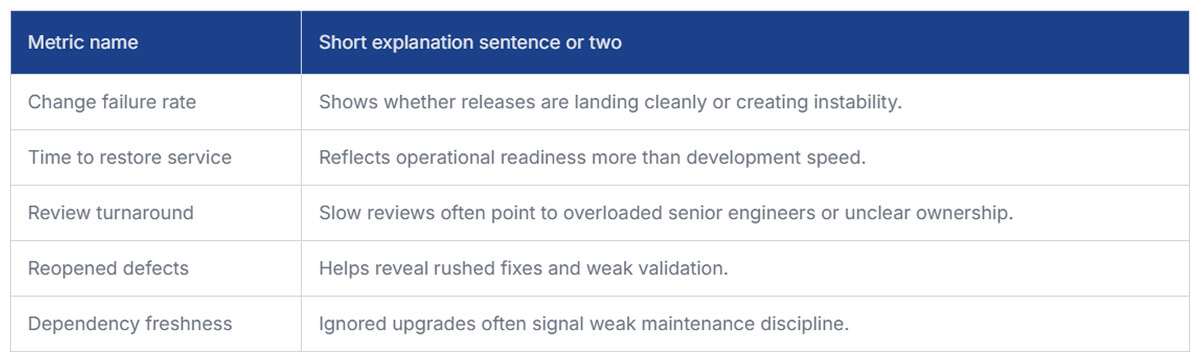

Oversight becomes vague when it relies solely on instinct. Teams usually need a small set of signals that expose risk without turning into reporting theater.

No metric should stand alone. The point is not to punish a number, but rather to identify where more attention is needed.

Most failures in oversight are not dramatic. They build slowly. A team keeps shipping, leadership assumes things are under control, and the warning signs get normalized because no single problem looks big enough on its own.

A few patterns show up repeatedly:

None of these seems unusual in a single sprint. Over a few quarters, they turn into missed forecasts, brittle services, and cleanup work nobody planned for.

Most teams already have standards. The harder question is whether those standards still hold when deadlines tighten. Oversight should check what survives normal delivery, not what looks good on paper.

That usually comes down to a few practical areas: review quality, testing on critical paths, clear service ownership, and security checks that fit the release workflow rather than disrupting it at random.

Strong engineering oversight also leaves room for exceptions. Teams will sometimes take shortcuts. The problem isn’t that it happens, but that nobody names the tradeoff, captures the risk, or follows through later.

The key aspects of engineering oversight are fairly plain-good visibility, stronger decision review, realistic standards, and early attention to risk. When engineering leadership oversight is working, the team usually feels less drama, not more. Things break less often, tradeoffs are clearer, and the system remains easier to live with over time.