Most teams experimenting with AI agents run into the same issue after the first demo. A single agent can look useful in isolation, but real systems rarely stay that simple. Once you add tools, state, routing, retries, and human review, the design starts to matter more than the model.

That is where AI agent frameworks become useful. They do not make the hard parts disappear, but they give teams a structured way to manage agent behavior, connect external systems, and keep the workflow from turning into a pile of brittle prompt logic.

Why teams use them

Many early agent implementations start as a thin wrapper around an LLM call. That works for small experiments, especially when the task is linear and the tool surface is narrow. The trouble starts when the agent needs to plan, call multiple tools, recover from errors, or coordinate with another agent.

An agent may choose the wrong tool. It may loop. It may call the right tool with the wrong arguments. It may produce a good answer once and a bad one the next time because the surrounding workflow is too loose. Good frameworks help reduce that drift.
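
One way to contain the looping failure mode is a step budget that sits outside the model. The sketch below is a minimal illustration, not any framework's API: `StepBudget`, `max_steps`, and `max_repeats` are hypothetical names chosen for this example.

```python
import json
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class StepBudget:
    """Hypothetical guard: caps total steps and repeated identical tool calls."""

    max_steps: int = 8
    max_repeats: int = 2
    steps: int = 0
    seen: dict = field(default_factory=dict)

    def check(self, tool: str, args: dict) -> Optional[str]:
        """Return a reason to stop, or None if the call may proceed."""
        self.steps += 1
        if self.steps > self.max_steps:
            return "step budget exhausted"
        # Serialize arguments so identical calls map to the same key.
        key = (tool, json.dumps(args, sort_keys=True))
        self.seen[key] = self.seen.get(key, 0) + 1
        if self.seen[key] > self.max_repeats:
            return f"repeated call to {tool} with identical arguments"
        return None
```

The agent loop would call `check` before every tool invocation and stop, or escalate to a human, when it returns a reason. The thresholds are workload-specific and are assumptions here.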

What actually matters in practice

When engineers compare AI agent frameworks, the marketing language is usually less useful than the runtime behavior. The real question is not whether a framework supports agents; most of them do. The better question is whether it helps you build something you can observe, debug, and change without rewriting the whole stack.

A practical framework usually needs a few things:

- Tool execution model: Clear control over how tools are called, validated, and retried.

- State handling: A reliable way to carry context across steps without stuffing everything into the prompt.

- Workflow control: Support for branching, handoffs, approval steps, or multi-step execution.

- Observability: Traces, logs, and intermediate reasoning artifacts that help during debugging.

- Integration surface: Straightforward hooks for APIs, databases, queues, and internal services.

These are not flashy features, but they are the difference between a demo agent and something a team can run inside a real product.
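
To make the tool execution point concrete, here is a minimal sketch of a tool wrapper that validates arguments before execution and retries only transient failures. `Tool`, `ToolArgumentError`, and the choice of `ConnectionError` as the retryable error are assumptions for illustration, not the interface of any particular framework.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable


class ToolArgumentError(ValueError):
    """Raised when a tool call fails validation before execution."""


@dataclass
class Tool:
    name: str
    func: Callable[..., Any]
    required: dict  # argument name -> expected type
    max_attempts: int = 3

    def validate(self, args: dict) -> None:
        missing = [k for k in self.required if k not in args]
        if missing:
            raise ToolArgumentError(f"{self.name}: missing arguments {missing}")
        unexpected = [k for k in args if k not in self.required]
        if unexpected:
            raise ToolArgumentError(f"{self.name}: unexpected arguments {unexpected}")
        for key, expected in self.required.items():
            if not isinstance(args[key], expected):
                raise ToolArgumentError(
                    f"{self.name}: {key} must be {expected.__name__}"
                )

    def call(self, args: dict, backoff_s: float = 0.0) -> Any:
        # Validation is never retried: bad arguments will not fix themselves.
        self.validate(args)
        for attempt in range(1, self.max_attempts + 1):
            try:
                return self.func(**args)
            except ConnectionError:
                if attempt == self.max_attempts:
                    raise
                time.sleep(backoff_s * attempt)  # simple linear backoff
```

The design choice worth noting is the split between the two failure classes: a validation error goes back to the model or a human, while a transient error is retried without involving the model at all.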

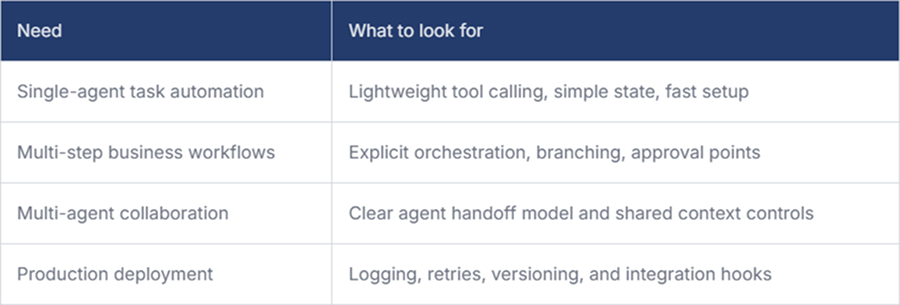

Framework choice depends on the workload

Not every agent system needs the same shape. A support triage workflow is different from a code review assistant. A document analysis pipeline is different again. This is why teams often overcomplicate the choice by looking for the best framework in general rather than the best fit for a specific execution pattern.

Deployment behavior is often underestimated. Many AI agent frameworks look strong in notebooks but start to show their limits once deployed behind real APIs, queues, and user-facing latency constraints.

Open source matters for agent systems

Many teams prefer an open-source AI agent framework because it gives them greater visibility into how execution, state, and tooling actually work. Agent systems often require custom tool adapters, internal policy checks, domain-specific memory, and workflow rules that do not neatly fit within a closed abstraction. These customizations are part of the architecture, not an afterthought.

Open source also makes it easier to inspect how state is passed, how tools are executed, and how orchestration is implemented under the hood. That matters when a production system misbehaves, and the team needs more than a nice dashboard. They need to understand the execution path.

Orchestration is where complexity shows up

The more serious the use case becomes, the more the discussion shifts from prompting to AI agent orchestration. That is usually the point where developers stop asking, “Can the model do this?” and start asking, “How do we make this reliable across many runs?”

Orchestration covers the messy but important parts, such as which agent gets control first, when a tool call should be blocked, how a failed step is retried, when a human must approve the next action, and how context is reduced so the workflow does not become slower and noisier with every turn.
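
A small sketch can show how a few of these decisions look when they are explicit rather than buried in prompts. Everything here is hypothetical: the tool names, the `BLOCKED_TOOLS` and `APPROVAL_TOOLS` tables, and the `execute` and `approve` callables stand in for whatever a real system provides.

```python
from enum import Enum
from typing import Any, Callable


class Decision(Enum):
    ALLOW = "allow"
    BLOCK = "block"
    NEEDS_APPROVAL = "needs_approval"


# Hypothetical policy tables; a real system would likely load these from config.
BLOCKED_TOOLS = {"drop_table"}
APPROVAL_TOOLS = {"send_refund", "delete_account"}


def decide(tool: str) -> Decision:
    if tool in BLOCKED_TOOLS:
        return Decision.BLOCK
    if tool in APPROVAL_TOOLS:
        return Decision.NEEDS_APPROVAL
    return Decision.ALLOW


def run_step(
    tool: str,
    args: dict,
    execute: Callable[[str, dict], Any],
    approve: Callable[[str, dict], bool],
) -> dict:
    """Apply the policy gate, then execute the tool only if permitted."""
    decision = decide(tool)
    if decision is Decision.BLOCK:
        return {"status": "blocked", "tool": tool}
    if decision is Decision.NEEDS_APPROVAL and not approve(tool, args):
        return {"status": "rejected", "tool": tool}
    return {"status": "ok", "tool": tool, "result": execute(tool, args)}


def trim_context(messages: list, keep_last: int = 6) -> list:
    """Keep the first (system) message plus the most recent turns."""
    if len(messages) <= keep_last + 1:
        return list(messages)
    return [messages[0]] + messages[len(messages) - keep_last:]
```

Dropping older turns is the crudest form of context reduction; summarizing them is a common alternative, but it adds another model call and another place for drift.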

In simple systems, orchestration can stay implicit. In larger systems, implicit orchestration can lead to bugs. One agent overwrites the context of another. A retry causes duplicate work. A planner keeps expanding a task that should have been terminated early. These are workflow problems more than model problems, and the framework should help make them visible.
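
The duplicate-work problem is usually handled with idempotency keys. The following is a minimal sketch under stated assumptions: `IdempotentExecutor` is a hypothetical class, and the in-memory dictionary stands in for durable storage that a production system would need.

```python
import hashlib
import json
from typing import Any, Callable


class IdempotentExecutor:
    """Caches results by (run_id, step, args) so a retry cannot repeat a side effect."""

    def __init__(self) -> None:
        # In-memory only for this sketch; a real system needs durable storage.
        self._results: dict = {}

    @staticmethod
    def key(run_id: str, step: str, args: dict) -> str:
        payload = json.dumps(
            {"run": run_id, "step": step, "args": args}, sort_keys=True
        )
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def execute(
        self, run_id: str, step: str, args: dict, func: Callable[..., Any]
    ) -> Any:
        k = self.key(run_id, step, args)
        if k not in self._results:
            # Only successful results are stored; an exception leaves no entry,
            # so a later retry will run the step again.
            self._results[k] = func(**args)
        return self._results[k]
```

With this in place, an orchestrator that retries a whole step after a timeout gets the stored result back instead of, for example, charging a customer twice.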

Where teams get stuck

A common mistake is choosing a framework based on how quickly it produces a compelling demo. That is understandable, but it tends to hide the real engineering burden.

The harder questions usually appear later. Can the agent be tested with stable fixtures? Can the tool layer be validated before execution? Can engineers replay a failed run? Can teams see whether adoption is improving workflows or just adding noise? This is also where platforms like Milestone help, by giving leaders clearer visibility into engineering workflows, GenAI adoption, and ROI without turning measurement into a separate project.
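
The replay question can be made concrete with a record-and-replay pair around the tool layer. This is a sketch, assuming the agent reaches its tools through a single `execute(tool, args)` callable and that arguments and results are JSON-serializable; `Recorder` and `Replayer` are hypothetical names.

```python
import json
from typing import Any, Callable


class Recorder:
    """Wraps tool execution and records each call with its result."""

    def __init__(self, execute: Callable[[str, dict], Any]) -> None:
        self._execute = execute
        self.events: list = []

    def __call__(self, tool: str, args: dict) -> Any:
        result = self._execute(tool, args)
        self.events.append({"tool": tool, "args": args, "result": result})
        return result

    def dump(self) -> str:
        return json.dumps(self.events)


class Replayer:
    """Serves recorded results in order and fails loudly on divergence."""

    def __init__(self, trace: str) -> None:
        self._events = json.loads(trace)
        self._pos = 0

    def __call__(self, tool: str, args: dict) -> Any:
        if self._pos >= len(self._events):
            raise AssertionError(f"unexpected extra call to {tool}")
        event = self._events[self._pos]
        if event["tool"] != tool or event["args"] != args:
            raise AssertionError(
                f"step {self._pos}: expected {event['tool']}, got {tool}"
            )
        self._pos += 1
        return event["result"]
```

A recorded trace doubles as a stable test fixture: the same agent code runs against `Replayer` in CI without touching live services, and any change in the sequence of tool calls surfaces as a failed assertion.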

This is also why comparing AI agent frameworks only by feature count is not very helpful. A smaller framework with better control over execution may be a safer choice than a broader one that hides too much behavior behind convenience abstractions.

Final thoughts

AI agent frameworks are useful when they reduce operational uncertainty, not when they simply wrap an LLM in more abstractions. The best choice usually comes from the workflow, the failure modes, and the level of control your team needs.

If the agent is going to live beyond a prototype, the framework should help you reason about execution, not just generate it.