Multi-agent systems are not a new idea, but they have become much more practical with modern language models. Instead of asking one model to do everything, teams now split work across several agents with different responsibilities. One agent may plan, another may retrieve data, and another may verify whether the output is actually usable.

That setup sounds simple on paper. In real-world systems, it only works when the boundaries are clear and the handoffs are controlled.

What Multi-Agent Systems Actually Mean

A multi-agent system is a software architecture in which multiple agents collaborate to complete a task that would be harder, slower, or less reliable for a single agent. Those agents may share context, call tools, pass messages, or work in sequence, depending on the workflow.

In older software systems, this pattern looked like distributed services with defined roles. In current AI products, a multi-agent AI system typically consists of several model-driven components that coordinate toward a goal. One agent may classify the request, another may search internal knowledge, and another may produce the final response in the correct format.

The important part is not the number of agents. It is the division of responsibility.

Why Teams Use Them

A single-agent workflow often starts failing in familiar ways. The model mixes planning with execution, forgets earlier constraints, or tries to answer before it has enough information. Once the task involves retrieval, validation, formatting, and tool use, a general-purpose agent can get messy fast.

Splitting the work across agents reduces that overload. Each agent can stay focused on one part of the job.

A common pattern looks like this:

- Router agent: Decides what kind of request came in and where it should go.

- Research agent: Pulls facts from documentation, APIs, or internal sources.

- Execution agent: Performs the main task, such as drafting, summarizing, or generating code.

- Review agent: Checks quality, policy, formatting, or factual consistency.

This does not automatically improve quality. It just makes the system easier to reason about when things go wrong.
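As a rough illustration of that pattern, here is a minimal Python sketch of the four roles wired into a sequential pipeline. Everything in it is hypothetical: the `Task` fields, the function names, and the keyword-based routing are stand-ins for what would normally be model or tool calls. The point is only that each stage owns one field and leaves a trace entry behind.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Task:
    # Shared state passed from agent to agent (hypothetical shape).
    request: str
    route: str = ""
    facts: list[str] = field(default_factory=list)
    draft: str = ""
    approved: bool = False
    trace: list[str] = field(default_factory=list)


def router(task: Task) -> Task:
    # Stub: a real router would probably ask a model to classify the request.
    task.route = "summarize" if "summar" in task.request.lower() else "answer"
    return task


def research(task: Task) -> Task:
    # Stub: stands in for documentation, API, or internal lookups.
    task.facts = [f"fact about: {task.request}"]
    return task


def execute(task: Task) -> Task:
    # Stub: builds the draft only from what research provided.
    task.draft = f"[{task.route}] " + "; ".join(task.facts)
    return task


def review(task: Task) -> Task:
    # Approve only drafts that are non-empty and backed by at least one fact.
    task.approved = bool(task.draft) and bool(task.facts)
    return task


PIPELINE: list[Callable[[Task], Task]] = [router, research, execute, review]


def run(request: str) -> Task:
    task = Task(request=request)
    for agent in PIPELINE:
        task = agent(task)
        task.trace.append(agent.__name__)  # record which stage ran, in order
    return task
```

Calling `run("Summarize the release notes")` returns a `Task` whose `trace` lists the stages in the order they ran, which is the part that helps when something goes wrong.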

Where They Work Well

The strongest use cases usually have some combination of branching logic, tool access, and verification. That is where a multi-agent system earns its complexity.

For example, in a compliance assistant, one agent can identify the regulation being asked about, another can fetch the relevant clauses, and a third can draft a risk summary. In a developer support tool, one agent can inspect logs, another can search docs, and another can suggest a fix with the appropriate level of confidence.

These systems also fit longer workflows where the output needs checkpoints. A single response may look smooth, but in production, smoothness matters less than traceability.

How to Build Multi-Agent Systems Without Making a Mess

If you are figuring out how to build multi-agent systems, start with two questions: what should each agent be allowed to do, and what should it never do?

That sounds basic, but most failures begin there. Agents overlap, repeat work, or step on each other because nobody has clearly defined ownership.
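One way to make those two questions concrete is to write them down as data and enforce them at the point where tools are called. The sketch below assumes a hypothetical `AgentPolicy` with an allowlist and a denylist, plus two made-up tools (`search_docs`, `send_email`); none of these names come from a real framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    name: str
    allowed_tools: frozenset[str]                  # what the agent may do
    forbidden_tools: frozenset[str] = frozenset()  # what it must never do


class ToolNotPermitted(Exception):
    pass


def call_tool(policy: AgentPolicy, tool: str, tools: dict, *args):
    # Deny by default: a tool must be explicitly allowed and not forbidden.
    if tool in policy.forbidden_tools or tool not in policy.allowed_tools:
        raise ToolNotPermitted(f"{policy.name} may not call {tool}")
    return tools[tool](*args)


# Hypothetical tool registry with stubbed behavior.
TOOLS = {
    "search_docs": lambda query: [f"doc hit for {query}"],
    "send_email": lambda to, body: f"sent to {to}",
}

research_policy = AgentPolicy(
    name="research",
    allowed_tools=frozenset({"search_docs"}),
    forbidden_tools=frozenset({"send_email"}),
)
```

With this in place, the research agent can search documentation, but an attempt to send email raises `ToolNotPermitted` instead of silently succeeding. Ownership becomes something the system checks rather than something the prompt hopes for.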

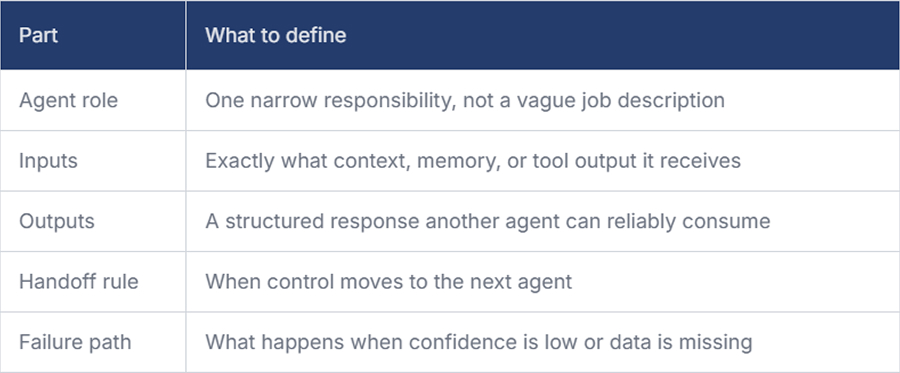

A practical design checklist usually includes the following:

Once that is clear, keep the orchestration thin. The controller should coordinate work, not hide it. If orchestration logic becomes too clever, debugging gets painful.

You also need to decide whether agents collaborate sequentially or in parallel. Sequential flows are easier to inspect. Parallel flows are faster, but they create merging problems. If two agents return slightly different interpretations of the same issue, something has to reconcile them.
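The merging problem is easier to see in code. The sketch below runs two stubbed agents in parallel with Python's standard `concurrent.futures` and then reconciles their answers. The agents, their hard-coded outputs, and the "highest confidence wins, but flag disagreement" rule are all assumptions made for illustration; a real system might instead escalate to a reviewer or a human.

```python
from concurrent.futures import ThreadPoolExecutor


def log_agent(issue: str) -> dict:
    # Stub: pretends to have inspected logs for the issue.
    return {"source": "logs", "diagnosis": "timeout", "confidence": 0.6}


def docs_agent(issue: str) -> dict:
    # Stub: pretends to have searched documentation for the issue.
    return {"source": "docs", "diagnosis": "misconfigured retry", "confidence": 0.8}


def run_parallel(issue: str, agents: list) -> list[dict]:
    # Submit every agent at once; results come back in submission order.
    with ThreadPoolExecutor(max_workers=len(agents)) as pool:
        futures = [pool.submit(agent, issue) for agent in agents]
        return [future.result() for future in futures]


def reconcile(results: list[dict]) -> dict:
    # Pick the most confident diagnosis, but keep the disagreement visible
    # so a later stage can decide whether to trust it.
    diagnoses = {result["diagnosis"] for result in results}
    best = max(results, key=lambda result: result["confidence"])
    return {
        "diagnosis": best["diagnosis"],
        "agreed": len(diagnoses) == 1,
        "candidates": results,
    }


merged = reconcile(run_parallel("checkout requests failing", [log_agent, docs_agent]))
```

Here `merged["agreed"]` is `False` because the two agents disagree, and the losing candidate is kept rather than discarded. The sequential version of the same flow would not need `reconcile` at all, which is the trade-off described above.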

The Real Problems Show Up Later

The first version of a multi-agent AI system often looks impressive in demos. The trouble starts when the input gets noisy.

An agent may pass along too much context. Another may assume that a tool’s result is reliable even when it is incomplete. Small mismatches turn into expensive failures because each agent trusts the previous one a bit too much. This is why strong schemas, validation, and logging matter more than clever prompts.
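A small sketch of what a strict handoff schema could look like, using only the standard library. The `ResearchHandoff` shape and its three fields are hypothetical; the idea is that the receiving agent rejects extra context, missing fields, and results that claim to be complete but carry nothing, and that every rejection is logged.

```python
import logging
from dataclasses import dataclass

logger = logging.getLogger("handoff")


@dataclass(frozen=True)
class ResearchHandoff:
    query: str
    facts: tuple[str, ...]
    complete: bool  # False when the tool result is known to be partial


class HandoffError(ValueError):
    pass


EXPECTED_FIELDS = {"query": str, "facts": list, "complete": bool}


def validate_handoff(payload: dict) -> ResearchHandoff:
    try:
        # Reject anything beyond the agreed fields to limit context leakage.
        extra = set(payload) - set(EXPECTED_FIELDS)
        if extra:
            raise HandoffError(f"unexpected fields: {sorted(extra)}")
        for key, expected_type in EXPECTED_FIELDS.items():
            if key not in payload:
                raise HandoffError(f"missing field: {key}")
            if not isinstance(payload[key], expected_type):
                raise HandoffError(f"{key} must be {expected_type.__name__}")
        if not all(isinstance(fact, str) for fact in payload["facts"]):
            raise HandoffError("every fact must be a string")
        # Do not trust a result that says it is complete but is empty.
        if payload["complete"] and not payload["facts"]:
            raise HandoffError("marked complete but carries no facts")
    except HandoffError as error:
        logger.warning("rejected handoff: %s", error)
        raise
    return ResearchHandoff(payload["query"], tuple(payload["facts"]), payload["complete"])
```

The next agent then receives a `ResearchHandoff` or nothing, so a partial tool result cannot quietly pass as a finished one.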

A few issues show up often:

- Context drift: Agents inherit irrelevant details and start optimizing for the wrong thing.

- Handoff ambiguity: One agent thinks a task is complete, while the next assumes more work is needed.

- Tool misuse: Agents call tools too early, too often, or without checking the quality of the results.

- Review gaps: Validation agents approve outputs that match format but miss substance.

That is why evaluation should happen at the workflow level, not only per agent. A strong individual agent can still produce a weak overall system.
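To make that concrete, here is a deliberately tiny sketch in which both agents pass their own checks while the workflow fails every end-to-end case. The agents, the knowledge base, and the test cases are invented for the example; the bug is a label-casing mismatch at the handoff.

```python
def classify(request: str) -> str:
    # Stub classifier: returns a capitalized label.
    return "Billing" if "invoice" in request.lower() else "General"


# Hypothetical knowledge base keyed by lowercase labels.
KNOWLEDGE_BASE = {
    "billing": "Invoices are issued monthly.",
    "general": "See the help center.",
}


def retrieve(label: str) -> str:
    # Stub retriever: returns an empty string for unknown labels.
    return KNOWLEDGE_BASE.get(label, "")


def workflow(request: str) -> str:
    return retrieve(classify(request))


# Per-agent checks: each agent looks fine when tested in isolation.
agent_checks = {
    "classify": classify("Where is my invoice?") == "Billing",
    "retrieve": retrieve("billing") == "Invoices are issued monthly.",
}

# Workflow-level cases: (request, expected final answer).
CASES = [
    ("Where is my invoice?", "Invoices are issued monthly."),
    ("Hello", "See the help center."),
]


def workflow_pass_rate(cases: list) -> float:
    passed = sum(workflow(request) == expected for request, expected in cases)
    return passed / len(cases)
```

Every entry in `agent_checks` is `True`, yet `workflow_pass_rate(CASES)` is `0.0`, because `classify` emits `"Billing"` while `retrieve` expects `"billing"`. Only the end-to-end cases surface the mismatch.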

Conclusion

Multi-agent systems are useful when the work naturally breaks into separate responsibilities. They are not a shortcut to better reasoning, and they do not fix weak architecture. They just give you more control over how complex AI workflows are shaped.

If you want to learn how to build multi-agent systems well, the practical answer is to stay narrow at first. Define clear roles, keep handoffs explicit, and make every stage inspectable. That is usually what separates a working system from one that only looks good in a demo.