Abstract

Token usage is emerging as the first widely available metric of AI-mediated software development. It is precise, universally measurable, and directly tied to cost. As a result, it is increasingly interpreted as a proxy for AI-driven productivity.

However, token counts measure input, not output. They reflect how much interaction occurs with generative systems, not what that interaction produces.

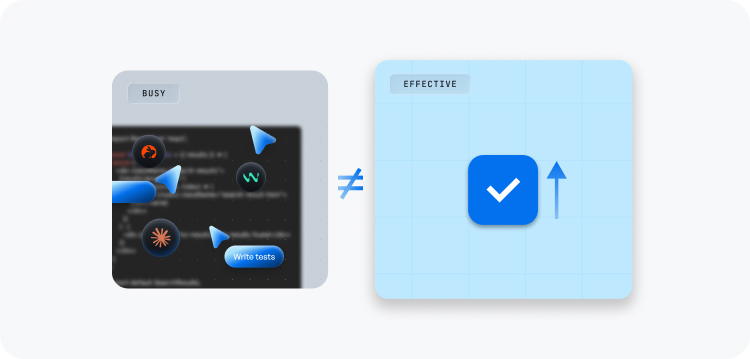

This paper describes the resulting pattern as token theatre, where visible activity in the generative layer creates a compelling but often misleading signal of progress.

We argue that reading token usage as productivity repeats a familiar mistake. Like lines of code and other activity-based metrics before it, token usage captures interaction rather than impact. It provides visibility into the generative layer of development, but does not resolve whether that activity produces meaningful, stable, or governed change.

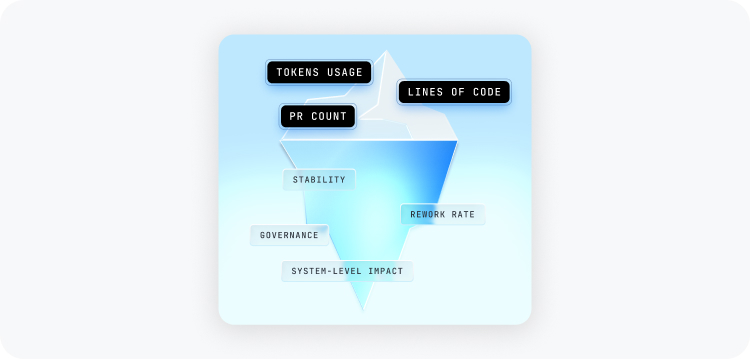

We introduce a simple structural distinction between first-order and second-order metrics. First-order metrics capture observable activity. Second-order metrics capture leverage, that is, how effectively activity translates into outcomes under constraints. Token usage is a first-order metric. Productivity is a second-order property.

The core failure mode emerging in AI-assisted development is the misinterpretation of first-order signals as second-order indicators. This paper reframes tokens as the cost of outsourced cognition and argues that meaningful assessment must evaluate how that cost converts into controlled acceleration.

1. The Return of a Familiar Error

Software engineering has a recurring measurement challenge. When a signal is easy to observe, it often becomes a proxy for value, even when the relationship is weak or indirect.

Lines of code, pull request counts, and cycle time all began as useful visibility tools. Over time, each was used as an indicator of productivity. In practice, this led to a familiar pattern: the metric itself remained stable, but its meaning drifted. Movement in these numbers did not always correspond to gains in quality, stability, or system understanding.

AI-assisted development introduces a new and unusually precise signal: token usage. Every interaction with a generative model is measured, and every request and response contributes to a quantifiable cost surface. For the first time, organisations can observe the scale of machine-mediated work involved in software production.

The resulting tendency to read token volume as progress is what this paper calls token theatre: visible activity begins to stand in for demonstrated progress. The effect is amplified because token counts measure input into the system, not the quality or impact of the resulting output. Because tokens are comparable and tied to cost, they feel like a direct view of AI contribution. In practice, they reflect activity in the generative layer rather than the value of the resulting change.

The challenge is not that token metrics are incorrect. It is that they are easy to over-interpret as indicators of productivity.

2. First-Order and Second-Order Metrics

It is useful to distinguish between two types of metrics that operate at different levels.

First-order metrics capture observable activity:

- Lines of code

- Pull request volume

- Token usage

- Prompt frequency

These signals represent what occurred within the system. They are typically straightforward to collect and compare. Many analytics products focus on presenting these data clearly, which reinforces their perceived usefulness.

Second-order metrics capture leverage:

- Stability of change under acceleration

- Relationship between output and rework

- Preservation of governance under velocity

These signals indicate what that activity produces, particularly when considered in the context of system constraints.

The distinction is structural. Activity is directly observable, but leverage must be inferred. It depends on how activity propagates through the system, how it interacts with existing structures, and whether it results in durable, reliable outcomes.

In practice, problems arise when first-order signals are interpreted as second-order indicators. When token usage is treated as a proxy for productivity, activity is used as a stand-in for outcome.
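The distinction can be made concrete with a minimal Python sketch. It assumes a hypothetical per-change record (`Change`, with the fields shown); none of these names come from a real analytics tool. First-order metrics are plain sums over what happened. The second-order metrics here are crude illustrative proxies: ratios that can only be computed once each change's later behaviour (whether it was reworked, whether it passed required controls) is known.

```python
from dataclasses import dataclass


@dataclass
class Change:
    """One merged change. All fields are hypothetical, for illustration only."""
    tokens: int                # tokens consumed while producing the change
    lines_added: int           # raw size of the change
    reworked: bool             # needed a follow-up fix shortly after merging
    passed_review_gates: bool  # went through required review and policy checks


def first_order(changes: list[Change]) -> dict[str, int]:
    """First-order metrics: direct counts of observable activity."""
    return {
        "changes": len(changes),
        "tokens": sum(c.tokens for c in changes),
        "lines_added": sum(c.lines_added for c in changes),
    }


def second_order(changes: list[Change]) -> dict[str, float]:
    """Second-order proxies: ratios inferred from how the activity turned out."""
    n = len(changes)
    if n == 0:
        return {"rework_rate": 0.0, "governed_share": 0.0}
    return {
        # share of changes that had to be revisited (stability of change)
        "rework_rate": sum(c.reworked for c in changes) / n,
        # share of changes that went through required controls (governance)
        "governed_share": sum(c.passed_review_gates for c in changes) / n,
    }


changes = [
    Change(tokens=12_000, lines_added=300, reworked=True, passed_review_gates=True),
    Change(tokens=3_000, lines_added=40, reworked=False, passed_review_gates=True),
]
print(first_order(changes))
print(second_order(changes))
```

With these two hypothetical changes, the first-order view reports 15,000 tokens and 340 added lines, while the second-order view reports that half of the changes needed rework: the same activity, read at two levels.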

3. Why Tokens Appear to Measure Productivity

Token metrics have several characteristics that make them appear useful. Each is reasonable in isolation, but together they create a strong, misleading sense that token usage should map to productivity.

First, tokens are precise and consistently counted, which makes them easy to compare.

Second, they are universal. Any interaction with a language model can be expressed in tokens, regardless of tool or workflow.

Third, they are economically grounded. Tokens map directly to cost.

Finally, they are upstream. Tokens capture activity in the reasoning process rather than in resulting artifacts.

It is also useful to be precise about what a token represents. In common discourse, tokens are often treated as a unit of intelligence or reasoning. In practice, they are a unit of text decomposition: the fragments, often sub-word pieces, into which a model segments its input and output. Token counts therefore reflect how much text is being generated and processed, not how much understanding or insight is being applied.
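A toy tokenizer makes the point concrete. The sketch below is not any real model's tokenizer (production tokenizers use learned sub-word vocabularies, such as byte-pair encoding, and would produce different counts); it splits text into words and punctuation and then chops long words into fixed-size fragments. Two requests asking for the same fix yield very different token counts.

```python
import re


def toy_tokenize(text: str, max_piece: int = 4) -> list[str]:
    """Split text into word and punctuation pieces, then chop long words into
    fragments of at most max_piece characters. A crude stand-in for sub-word
    tokenization; real model tokenizers will give different counts."""
    pieces = re.findall(r"\w+|[^\w\s]", text)
    tokens: list[str] = []
    for piece in pieces:
        # break each piece into consecutive chunks of up to max_piece characters
        tokens.extend(piece[i:i + max_piece] for i in range(0, len(piece), max_piece))
    return tokens


terse = "Fix the off-by-one in paginate()."
verbose = ("Could you please take a careful look at the paginate function and "
           "fix the off-by-one error that seems to be occurring in it?")

print(len(toy_tokenize(terse)), len(toy_tokenize(verbose)))
```

The verbose request costs close to three times as many toy tokens as the terse one while conveying the same intent. The count tracks how much text was produced, not how much understanding went into it.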

Taken together, these properties encourage a natural inference: if tokens measure AI involvement, and AI contributes to productivity, then token usage should signal productivity.

However, this inference does not hold in practice.

Tokens measure the intensity of interaction with a generative system, not the effectiveness of that interaction. They do not capture decision quality, code stability, or downstream impact.

Because tokens measure intensity rather than effectiveness, high token usage can accompany trivial, redundant, or unstable outputs, while relatively small amounts of well-directed interaction can produce significant impact. Neither pattern holds reliably: heavy usage sometimes accompanies valuable change, and light usage sometimes produces little. There is no stable, predictive relationship between token usage and outcome.

Token metrics describe activity in a way that is unusually clear and compelling. This clarity makes them persuasive, but not sufficient. Precision does not necessarily imply relevance, and cost does not necessarily imply value.

A simple example illustrates the point. A team adopts an AI coding assistant and sees a rapid increase in token usage and pull request throughput. On the surface, this appears to indicate improved productivity. However, over time, the team also observes higher rates of follow-up fixes, increased review load, and a growing need to revisit recently merged changes. In this case, token usage accurately reflects increased activity, but it does not reflect whether that activity is producing stable, high-quality outcomes. Without additional signals, the increase in tokens can be misread as progress rather than a change in the structure of work.
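The example can be expressed as a small Python sketch that sets one first-order reading (growth in tokens and merged pull requests) against one second-order reading (the share of merged pull requests that were follow-up fixes). The `Period` fields, the comparison rule, and the numbers are all hypothetical and for illustration only.

```python
from dataclasses import dataclass


@dataclass
class Period:
    """Aggregate signals for one reporting period (hypothetical fields)."""
    tokens: int
    merged_prs: int
    follow_up_fixes: int  # merged PRs that fix something merged recently


def rework_rate(p: Period) -> float:
    """Fraction of merged PRs that were follow-up fixes."""
    return p.follow_up_fixes / p.merged_prs if p.merged_prs else 0.0


def read_trend(before: Period, after: Period) -> str:
    """Compare two periods on a first-order and a second-order signal."""
    activity_up = after.tokens > before.tokens and after.merged_prs > before.merged_prs
    stability_down = rework_rate(after) > rework_rate(before)
    if activity_up and stability_down:
        return "more activity, less stable outcomes: structure of work changed"
    if activity_up:
        return "more activity with stable outcomes: candidate acceleration"
    return "no clear increase in activity"


# Illustrative numbers only, not measurements from any real team.
before = Period(tokens=2_000_000, merged_prs=80, follow_up_fixes=8)
after = Period(tokens=9_000_000, merged_prs=140, follow_up_fixes=35)
print(read_trend(before, after))
```

Neither signal is interpreted alone: token growth is only labelled as possible acceleration when the rework rate does not rise alongside it.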

4. Tokenmaxing as a Behavioural Pattern

As token metrics become more visible, optimisation naturally shifts toward what can be measured. This introduces a set of reinforcing effects that shape both behaviour and judgement.

At a practical level, teams begin to optimise interaction with the model itself. This can take the form of prompt churn, redundant generation cycles, or over-decomposition of tasks into multiple AI-assisted steps. These behaviours increase token usage, but do not necessarily improve outcomes.

At the same time, the relationship between effort, understanding, and output becomes less direct. Engineers can produce larger volumes of change with less direct engagement in the underlying reasoning. Large volumes of generated code can give the impression of progress even when structure, coherence, or long-term stability are unclear; where code volume is less visible, rising token counts play the same role. In these cases, token counts act as a proxy for activity rather than quality.

A related pattern is the assumption that imperfections in generated output are temporary and will be corrected by future iterations of the same systems. This shifts how quality is perceived in the present. If issues are expected to be resolved later, the immediate cost of low-quality output appears lower, and the discipline applied during development can weaken.

Taken together, these effects produce token theatre, where visible activity substitutes for demonstrated progress. Optimising for token usage can create unwarranted confidence in the resulting engineering.

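This can also create a feedback loop in which visibility reinforces interpretation: the more prominent token usage becomes, the more readily it is read as progress, and the more behaviour shifts toward increasing it. Elevated token usage can give a managerial “vibe” of progress, even when it reflects inefficient iteration, prompt churn, or difficulty converging on a correct solution.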

This pattern is not new. Similar dynamics have been observed with earlier metrics. When lines of code were used as a signal of performance, more code was often produced. When throughput was emphasised, engineering practices often shifted to optimise for speed, sometimes at the expense of stability. More broadly, the discipline has repeatedly adapted to these forms of measurement pressure, often with unintended consequences.

Token usage introduces a comparable dynamic. When it is interpreted as a measure of productivity, optimisation shifts toward interaction with the model rather than the outcomes that interaction produces. Token metrics therefore provide visibility into activity, but not, on their own, a reliable view of impact.

5. Tokens as the Cost of Outsourced Cognition

The preceding sections suggest a different way to interpret token usage. The underlying problem is a confusion between input and output measures. Tokens should be treated not as a measure of output but as inputs: they do lead to outputs, but the pathway between them must be validated.

High token counts, in isolation, do not carry a clear interpretation. They become informative when evaluated alongside evidence of outcome quality, stability, and governance, and when that surrounding evidence does not raise concerns about the resulting engineering.

AI systems externalise portions of the reasoning process. Tasks that previously required direct human cognitive effort are now carried out through interaction with generative models. Tokens are the unit by which this interaction is processed and billed.

In this sense, token usage represents the cost of machine-mediated input into the engineering process. It reflects how much text is being generated, processed, and iterated on, rather than the quality of the resulting outcome.

This reframing helps resolve the confusion between input and output measures. Whether machine-mediated input produces value depends on how it is directed and how its outputs are integrated into the broader system.

This aligns token metrics with familiar concepts in other domains. Compute cost, for example, does not measure system performance. It measures the resources consumed to achieve it. Performance depends on how effectively those resources are applied.

Similarly, tokens measure the scale of input into a generative process. Productivity emerges from how that input is translated into stable, coherent, and well-governed change.
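Following the compute-cost analogy, token spend can be normalised by what it durably produced. The sketch below assumes a hypothetical metric, tokens per stable change, where a change counts as stable if it was merged and not subsequently reworked; this definition is a simplification chosen for illustration, and the team figures are invented.

```python
def tokens_per_stable_change(total_tokens: int, merged: int, reworked: int) -> float:
    """Token spend divided by the number of merged changes that held up.

    'Stable' is a hypothetical simplification: merged and not reworked.
    Returns infinity when nothing stable was produced, since the spend
    bought no durable change.
    """
    if reworked > merged:
        raise ValueError("reworked cannot exceed merged")
    stable = merged - reworked
    return total_tokens / stable if stable else float("inf")


# Same token spend, different conversion into durable change (illustrative numbers).
team_a = tokens_per_stable_change(total_tokens=5_000_000, merged=100, reworked=10)
team_b = tokens_per_stable_change(total_tokens=5_000_000, merged=100, reworked=60)
print(f"A: {team_a:,.0f} tokens per stable change, B: {team_b:,.0f}")
```

Both hypothetical teams spend the same number of tokens, so a first-order view cannot tell them apart; the normalised figure shows that team B pays more than twice as much per durable change.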

6. From Activity to Leverage

If tokens measure input, their meaning cannot be read directly from the count. It must be interpreted.

This introduces a shift in how measurement itself is understood. Metrics are not simply read from activity signals. They are constructed as models over those signals.

In traditional settings, activity and outcome were more tightly coupled, which allowed simple metrics to function as rough proxies for performance. In AI-assisted environments, that coupling weakens. Signals such as token usage, pull request volume, or cycle time continue to move, but their relationship to outcome becomes less direct.

As a result, measurement becomes a reasoning problem. Signals are proxy-based and often conflicting. Determining what they mean requires integrating them within a structured model that accounts for stability, rework, and system-level impact.

In this context, token usage is one input among many. On its own, it provides a view into interaction with AI systems. To become meaningful, it must be evaluated within a model that relates this activity to stability, rework, and system-level change.

This is the distinction between activity and leverage. Activity can be observed. Leverage must be inferred. More broadly, this reflects a shift from measuring activity to governing how signals are translated into decisions and change.

One way to formalise this distinction is through the concept of controlled acceleration. In our prior work, this is expressed as a model that integrates multiple dimensions of system behaviour. Rather than treating any single signal as definitive, it models how delivery, stability, and governance interact under increasing levels of automation. In broader terms, this reflects an approach where signals are combined into structured models that evaluate how change propagates and is absorbed across the system.

The implication is that token usage is an input into a broader analytical process. Its value lies in how it is interpreted within a model, not in what it measures directly.

7. Implications for Measurement

The shift from activity to model-based interpretation has practical consequences.

In many organisations, this is already visible. There is increasing pressure to relate token expenditure to the value of the resulting output. This reflects a recognition that increased input, whether human or AI-mediated, does not automatically produce better engineering outcomes.

In more mature organisations, token usage is no longer treated as a standalone signal, but as one input within a broader evaluation process. It functions as a multi-purpose signal, indicating activity and apparent progress while also reflecting cost and, in some cases, the presence of rework in the development process. Its meaning depends on how it correlates with indicators of stability, rework, and system-level impact.

This also changes how engineering work is assessed. Evaluation moves away from measuring activity and toward understanding how that activity is absorbed by the system. Questions shift from “how much was produced” to “what was produced, and how safely.”

In this setting, measurement becomes less about selecting the right metric and more about constructing the right model. Signals are combined, constrained, and interpreted in context, rather than read in isolation.
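One way to picture signals being combined, constrained, and interpreted in context is a rule-based sketch in which token growth is never read on its own but only through stability and governance constraints. This is a hypothetical illustration, not the controlled-acceleration model referred to in Section 6; the `Signals` fields and both thresholds are placeholders chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """Signals for one period relative to a baseline (hypothetical fields)."""
    token_growth: float         # fractional change, e.g. 0.5 means +50% tokens
    throughput_growth: float    # fractional change in merged changes
    rework_rate_change: float   # absolute change in the share of changes reworked
    governance_coverage: float  # share of changes passing required controls, 0..1


def interpret(s: Signals, max_rework_rise: float = 0.02,
              min_coverage: float = 0.95) -> str:
    """Interpret token growth only through the constraints around it.

    Both thresholds are arbitrary placeholders, not recommended values.
    Checks run in order, so a constraint failure overrides any throughput gain.
    """
    if s.governance_coverage < min_coverage:
        return "ungoverned: activity is bypassing required controls"
    if s.rework_rate_change > max_rework_rise:
        return "unstable: added activity is being absorbed as rework"
    if s.throughput_growth > 0:
        return "accelerating within constraints"
    if s.token_growth > 0:
        return "cost without delivery: spend rose, throughput did not"
    return "no material change"


print(interpret(Signals(token_growth=3.0, throughput_growth=0.5,
                        rework_rate_change=0.10, governance_coverage=0.99)))
```

The ordering of the checks encodes a judgement: a governance or stability failure overrides any apparent gain in throughput, so rising token usage is only read as acceleration when the constraints around it hold.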

The result is a more demanding, but more accurate, form of assessment. It reflects not just what is visible, but what is happening within the system as a whole.

Conclusion

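This paper has examined a specific failure mode: treating token usage, a first-order signal, as evidence of productivity, a second-order property. We describe it as token theatre: visible interaction creates a compelling signal of progress, even when the underlying engineering is uncertain.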

The response is not to discard token metrics, but to interpret them correctly. Token usage is informative when treated as one input among many and evaluated against evidence of stability, rework, and system-level impact.

This requires a shift in how measurement is approached. Metrics are not read from activity. They are constructed as models that resolve what that activity means.

The question is not how many tokens were consumed, but what they produced.

Token metrics do not resolve the measurement problem. They make its limits explicit.