Measuring performance in software teams looks simple from a distance. Delivery speed, bug counts, pull requests, on-call incidents: there is always another metric to check. The hard part is deciding what those numbers actually mean in the middle of real engineering work.

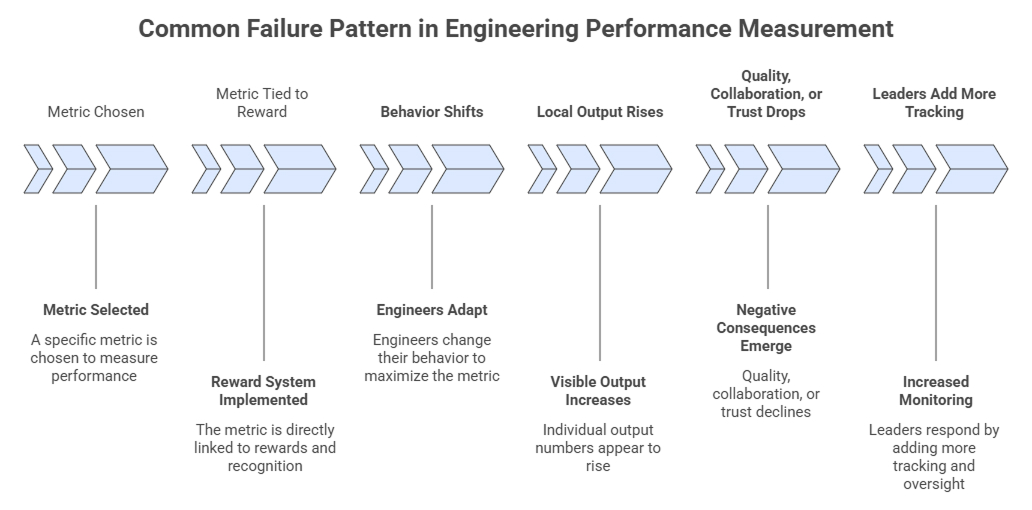

Most teams do not struggle because they have no data. They struggle because poor measurement changes behavior faster than it creates clarity. A system meant to improve engineering team performance can quietly damage trust, create defensive habits, and push people toward work that looks visible rather than work that matters.

Why Traditional Performance Measurement Often Backfires

Many performance systems fail for the same reason. They reduce messy teamwork to individual output numbers and treat those numbers as objective truth.

That sounds efficient, but engineering rarely works that way. One engineer may spend a week removing a risky dependency, untangling a migration path, or helping two other teams unblock a release. Another may close fifteen tickets in the same period. If a manager looks only at visible output, the second person appears stronger even when the first created more long-term value.

The damage is not always dramatic. It usually shows up as quieter signals:

- engineers optimizing for activity instead of outcomes

- less willingness to pair, mentor, or review carefully

- friction between teams when local metrics beat shared goals

- managers treating context as an excuse instead of part of the job

When these occur, the numbers still appear clean. The team does not.

What Healthy Engineering Team Performance Actually Looks Like

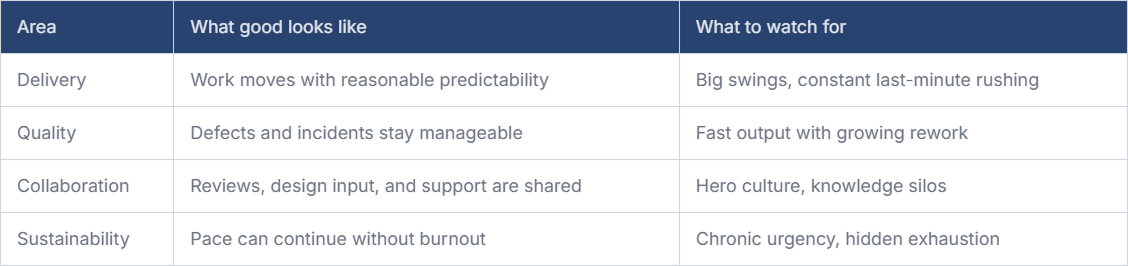

Strong teams tend to look more steady than impressive. They deliver work with reasonable predictability, recover from problems without chaos, and maintain technical quality under pressure. They also make space for unglamorous work such as review, design alignment, documentation, and incident learning.

Healthy performance is better understood as a combination of delivery, quality, collaboration, and sustainability. If one of those disappears, the others usually degrade over time.

Delivery, quality, and sustainability need to be judged together, because a team may look fast on a dashboard while actually skipping reviews, testing, or design work. In contrast, truly strong teams pair steady delivery with low defects, good collaboration, shared knowledge, and a pace they can maintain without burnout.


Which Engineering Productivity Metrics Matter Most

Most teams do not need more metrics. They need fewer with clearer meaning. Useful engineering productivity metrics should help managers ask better questions. For teams adopting AI tools, Milestone can show whether those changes are improving flow, visibility, and output.

Cycle time is useful because it shows how long work takes from start to finish. When it starts growing, it usually points to friction worth investigating, such as slow reviews, unclear scope, or growing dependencies. Throughput can also help, especially at the team level, because it shows how much work gets completed over time. It becomes misleading, though, when different kinds of work are compared directly or when it is used to rank individuals.
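As a rough illustration of those two measures, the sketch below computes a median cycle time and a weekly throughput count for one team. The `WorkItem` record, its fields, and the sample dates are all hypothetical; a real tracker will define "start" and "finish" in its own way, and that definition matters more than the arithmetic.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date
from statistics import median


@dataclass(frozen=True)
class WorkItem:
    """Hypothetical record of one completed piece of team work."""
    team: str
    started: date
    finished: date


def cycle_time_days(item: WorkItem) -> int:
    # Calendar days from start to finish (assumed definition of cycle time).
    return (item.finished - item.started).days


def median_cycle_time(items: list) -> float:
    # The median is less sensitive to one unusually long item than the mean.
    return median(cycle_time_days(i) for i in items)


def weekly_throughput(items: list) -> dict:
    # Count completed items per (ISO year, ISO week) of their finish date.
    counts = Counter()
    for item in items:
        iso = item.finished.isocalendar()
        counts[(iso[0], iso[1])] += 1
    return dict(counts)


items = [
    WorkItem("payments", date(2024, 3, 4), date(2024, 3, 6)),   # 2 days
    WorkItem("payments", date(2024, 3, 4), date(2024, 3, 8)),   # 4 days
    WorkItem("payments", date(2024, 3, 5), date(2024, 3, 14)),  # 9 days
]

print(median_cycle_time(items))   # 4
print(weekly_throughput(items))   # {(2024, 10): 2, (2024, 11): 1}
```

Both functions take a whole team's items on purpose. Nothing here groups by person, which keeps the numbers at the level where the article argues they are meaningful.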

Quality signals matter because output without stability is costly. Teams should watch escaped defects, rollback frequency, incident volume, and rework caused by rushed decisions. Allocation adds another layer of context, since a team spending much of its time on support, incidents, approvals, or other unplanned work may have low feature output for valid reasons. Without that context, managers can end up pushing the wrong fix.
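To make the allocation point concrete, here is a minimal sketch that turns logged hours into a share per category. The category names and the hour figures are invented for illustration, and it assumes the team already has some way of tagging time or tickets by type.

```python
from collections import defaultdict


def allocation_shares(entries):
    """Map each category to its fraction of total logged hours.

    `entries` is a list of (category, hours) pairs (hypothetical format).
    """
    totals = defaultdict(float)
    for category, hours in entries:
        totals[category] += hours
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {}
    return {category: hours / grand_total for category, hours in totals.items()}


# Invented sample: one team's hours over a reporting period.
entries = [
    ("project", 40),
    ("support", 30),
    ("incidents", 20),
    ("project", 10),
]

shares = allocation_shares(entries)
unplanned = shares["support"] + shares["incidents"]

print(shares["project"])  # 0.5
print(unplanned)
```

In this made-up period, half of the team's time went to support and incidents. A drop in feature output read alongside that number prompts a different conversation than the same drop read alone.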

A balanced set might include:

- cycle time

- throughput at the team level

- escaped defects or incident rate

- rework percentage or rollback frequency

- allocation across project work, maintenance, support, and interruptions

- team health signals from retrospectives, turnover risk, or recurring friction
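Two of the items above, rollback frequency and rework percentage, are simple ratios once a team agrees on what to count. The sketch below assumes rollbacks are counted against deployments and that reopened items are an acceptable proxy for rework; both definitions, and the `TeamPeriod` fields, are assumptions rather than a standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TeamPeriod:
    """Team-level counts for one reporting period (hypothetical fields)."""
    deployments: int
    rollbacks: int
    items_completed: int
    items_reopened: int  # assumed proxy for rework


def rollback_frequency(period: TeamPeriod) -> float:
    # Fraction of deployments that were rolled back; 0.0 if nothing shipped.
    if period.deployments == 0:
        return 0.0
    return period.rollbacks / period.deployments


def rework_percentage(period: TeamPeriod) -> float:
    # Percentage of completed items that were later reopened.
    if period.items_completed == 0:
        return 0.0
    return 100 * period.items_reopened / period.items_completed


period = TeamPeriod(deployments=40, rollbacks=2, items_completed=50, items_reopened=5)

print(rollback_frequency(period))  # 0.05
print(rework_percentage(period))   # 10.0
```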

What matters is how these measures are used. Good managers treat metrics as indicators, not verdicts. If cycle time worsens, they ask what changed. If throughput dips, they check whether the team is absorbed in onboarding, architectural work, or operational noise. If quality drops, they look for process strain before assigning blame.
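One way to encode "indicators, not verdicts" is to have a dashboard emit questions instead of scores. The sketch below compares two periods and returns prompts for discussion. The metric keys, the 15% tolerance, and the function name are hypothetical choices, not a recommended threshold.

```python
from __future__ import annotations


def review_prompts(previous: dict, current: dict, tolerance: float = 0.15) -> list[str]:
    """Return discussion prompts for metrics that moved beyond the tolerance.

    The output is a list of questions to ask, never a rating.
    """
    prompts = []
    if current["cycle_time_days"] > previous["cycle_time_days"] * (1 + tolerance):
        prompts.append("Cycle time worsened: what changed?")
    if current["throughput"] < previous["throughput"] * (1 - tolerance):
        prompts.append(
            "Throughput dipped: is the team absorbed in onboarding, "
            "architectural work, or operational noise?"
        )
    if current["escaped_defects"] > previous["escaped_defects"] * (1 + tolerance):
        prompts.append("Quality dropped: where is the process under strain?")
    return prompts


# Invented team-level numbers for two consecutive periods.
previous = {"cycle_time_days": 4.0, "throughput": 20, "escaped_defects": 3}
current = {"cycle_time_days": 6.0, "throughput": 19, "escaped_defects": 3}

prompts = review_prompts(previous, current)
for prompt in prompts:
    print(prompt)  # prints only the cycle time question for this sample
```

The small throughput change stays under the tolerance and raises nothing, which is the intended behavior: ordinary variation should not start a conversation by itself.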

This approach keeps engineering performance metrics grounded in reality. It also reduces the temptation to turn dashboards into scorecards.

How to Approach Engineer Performance Evaluation Without Hurting Morale

Individual evaluation gets sensitive fast. Engineers usually accept feedback. What they resent is vague judgment, selective evidence, or a process that ignores the actual shape of their work.

A fair review should combine observable results, technical judgment, team contribution, and context over time. Too many organizations still lean on snapshot metrics simply because they are easier to export than thoughtful assessments.

Start with role expectations

Performance should be evaluated against the demands of the role. A senior engineer should not be assessed the same way as a new graduate.

For senior and staff engineers, influence often matters more than raw output. Design clarity, incident leadership, mentoring, and cross-team decision making usually carry more weight than ticket volume. For early-career engineers, growth in problem-solving, code quality, follow-through, and learning velocity often matters more.

Make feedback continuous

Managers need recurring feedback loops, not surprise verdicts twice a year. If an engineer learns of a concern for the first time during a formal review, the system has already failed.

Performance conversations should feel familiar before they become documented.

Account for context

Context matters more than many review systems admit. An engineer who spent a month stabilizing a failing service may show fewer visible deliverables than someone working on clean greenfield tasks. Someone helping a new team or spending heavy time onboarding may look less productive in the short term while doing exactly what the organization needs.

Use better review questions

A healthy review discussion usually focuses on a few simple questions:

- What outcomes did this person help create?

- How did they work with others?

- What kinds of problems did they take on?

- Where did they improve?

- Where do they need support?

That produces a much stronger conversation than asking how many pull requests they merged.

Make invisible work visible

Managers should also be explicit about what does not count against people. Time spent mentoring, reducing risk, improving tooling, reviewing deeply, or documenting core systems should not disappear from the record just because it is harder to count. Those activities hold teams together.

Involve engineers in the model

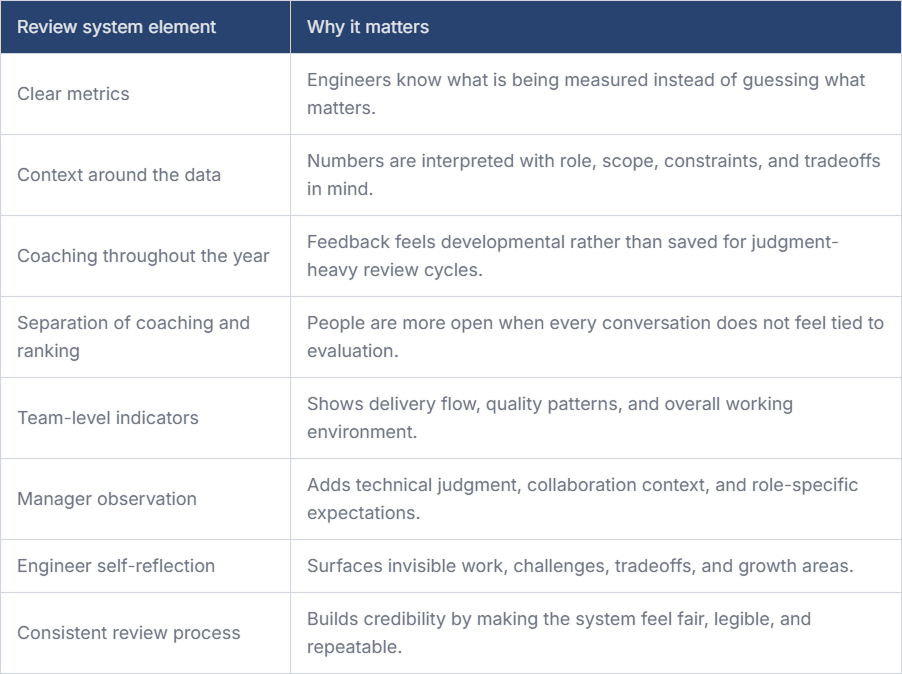

Another practical step is involving engineers in defining the measurement model. Not every metric needs committee approval, but teams should understand why a measure exists, what it does not mean, and how it will be interpreted.

People are far less defensive when they can see that the system reflects the work they actually do.

Building a Review System People Can Trust

Trust does not come from promising that measurement will be painless. It comes from making the system legible and consistent.

That means teams should know which data is being examined, how often, and with what constraints. If managers refer to metrics during reviews, they should also explain the context they considered and the data gaps. Engineers can handle honest nuance better than false precision.

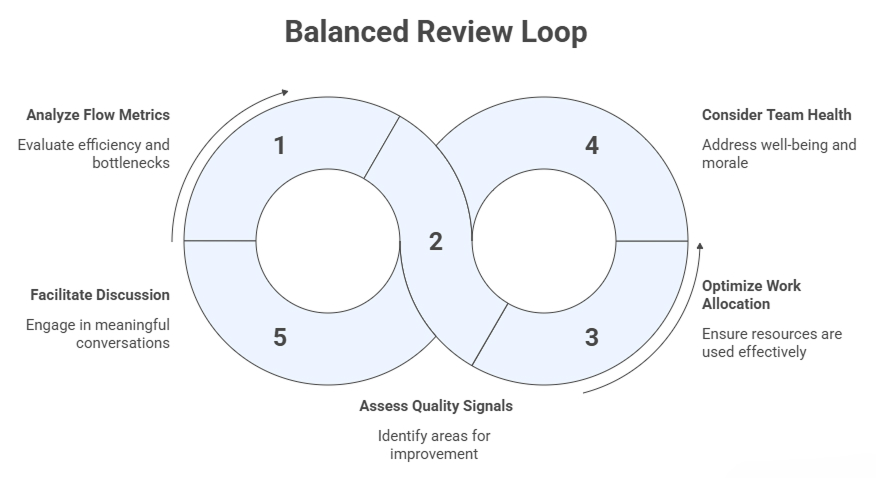

A useful review system also separates coaching from ranking as much as possible. Coaching should happen year-round and focus on better decision-making, habits, and trade-offs. Formal review can still exist, but it should not be the only place where performance is discussed. When every conversation feels tied to judgment, people get careful in the wrong ways.

One practical pattern is to combine three inputs: team-level indicators, manager observation, and self-reflection from the engineer. Team indicators show environment and flow. Manager observation adds technical and organizational context. Self-reflection often reveals invisible work, trade-offs, and areas where the person already knows they need to improve.

None of this makes the assessment perfectly objective, but it makes it more credible, which is usually the real goal. A review system becomes more credible when it combines transparent inputs, ongoing feedback, and clear interpretation.

Conclusion

The best performance systems do not try to eliminate judgment. They try to make judgment fairer, calmer, and more grounded. If leaders use metrics to understand work rather than flatten it, they make better decisions and build healthier teams. That is usually a stronger result than a cleaner dashboard.

FAQs

1. What metrics actually reflect healthy engineering team performance?

Healthy performance usually shows up through a mix of flow, quality, and sustainability. Look at cycle time, throughput, escaped defects, rollback frequency, work allocation, and signs of team strain. No single metric is enough. The patterns across them tell you whether the team is delivering in a stable, repeatable way.

2. How can managers give performance feedback without hurting morale?

Feedback lands better when it is specific, regular, and tied to observed work rather than vague judgment. Managers should discuss impact, trade-offs, and growth areas early, not save everything for formal reviews. It also helps to recognize invisible contributions, such as mentoring, risk reduction, and collaborative problem-solving.

3. How do you balance individual vs. team performance in engineering?

Start with team outcomes because software delivery is shared work. Then evaluate individual contribution in that context. A strong engineer may improve architecture, review quality, or cross-team execution without producing the most visible output. The balance works when leaders avoid treating isolated activity as a complete picture of value.

4. What are common mistakes leaders make when evaluating engineers?

The usual mistakes are overvaluing visible output, ignoring work complexity, comparing roles that are not alike, and using metrics as final answers rather than prompts for discussion. Another common problem is surprising people with feedback during formal reviews. That turns evaluation into a threat instead of a useful management practice.

5. How can engineers be involved in defining how their performance is measured?

They should be included early in the process when teams choose metrics and review criteria. Engineers can point out where measures are easy to game or where important work is being missed. Involvement also builds trust because people understand the system’s purpose, limits, and trade-offs before it affects evaluations.