AI tooling is no longer experimental. Most teams already depend on it in their daily workflows, though that dependence remains uneven across roles and projects. That shift is forcing engineering leaders to treat AI tool budgets as something that needs structure, not just enthusiasm.

Finance teams are asking harder questions too: not whether AI is useful, but whether the spending is controlled, justified, and connected to work that actually matters.

Why AI Tool Spending Is Becoming a Leadership Issue

A few years ago, tooling decisions mostly stayed within teams. A team picked a code assistant or testing tool and moved on, and the cost was predictable enough that leaders rarely looked beyond procurement and seat approvals.

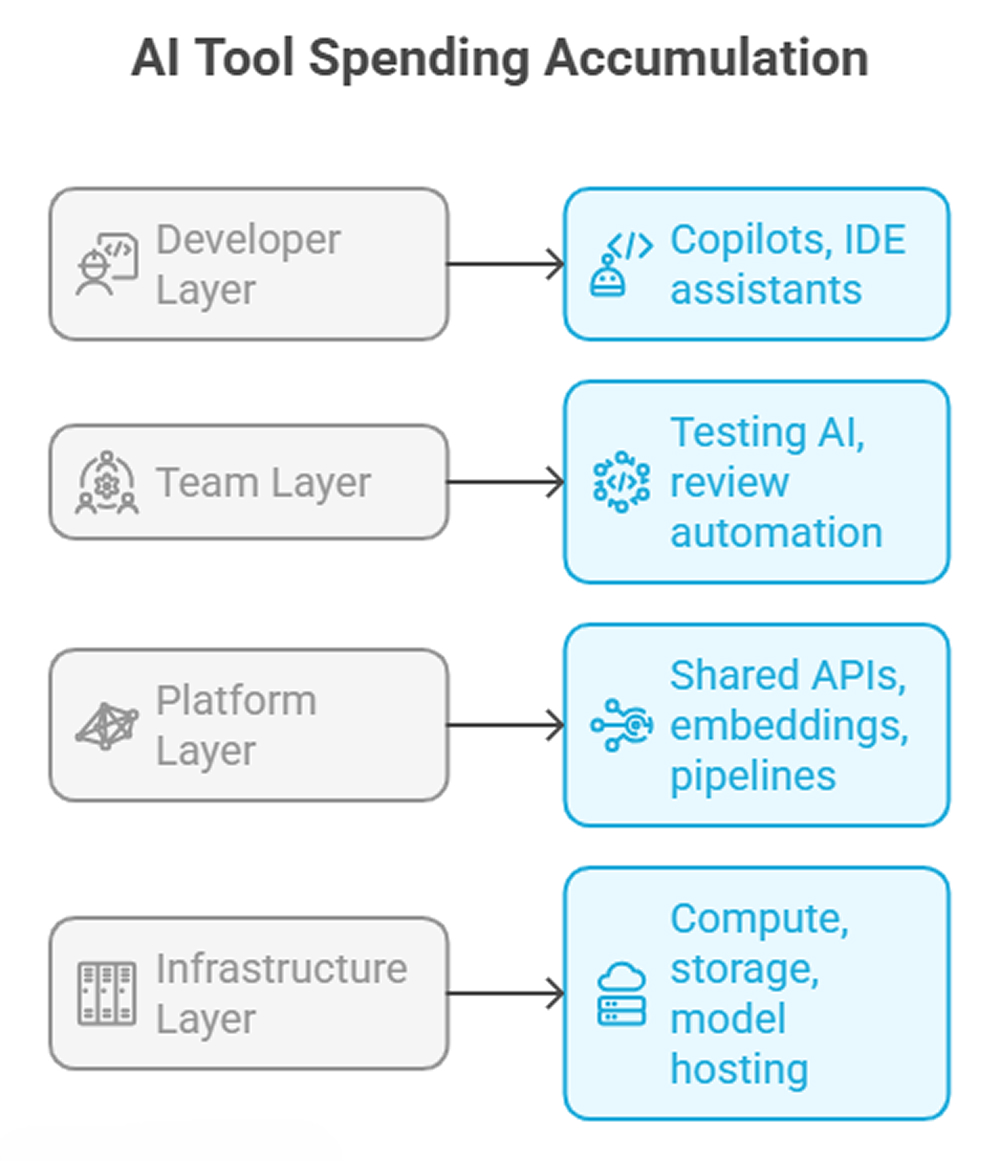

That is no longer the case. Today, engineering AI tools span multiple layers of the stack, from developer copilots to internal inference pipelines and agent-based workflows, with costs coming from licenses, API usage, infrastructure, and duplicated effort across teams. What used to be a minor tooling discussion is now an organizational issue, with budget decisions involving architecture, security, and management.

Leaders now deal with questions like:

- Who owns the cost of shared AI platforms?

- Why are multiple teams paying for similar tools?

- Does usage actually translate into output?

Without clear answers, tooling spend starts to drift. Once that drift sets in, it becomes difficult to tell whether the problem is genuine growth or just poor coordination.

Simple view of where costs accumulate

Each layer looks reasonable on its own. Combined, though, they fragment spending across teams and budgets. The difficult part is that nobody sees the whole picture unless someone deliberately puts it together.
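Assembling that picture can start with nothing more than a roll-up of cost line items. This sketch assumes spend can be exported as (team, layer, monthly cost) records; the `CostItem` type, team names, layer labels, and amounts are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class CostItem:
    team: str          # hypothetical: team that pays for this line item
    layer: str         # hypothetical stack layer, e.g. "copilot", "inference", "agents"
    monthly_usd: float


def roll_up_by_layer(items: list[CostItem]) -> dict[str, dict]:
    """Total monthly spend per layer, plus the set of teams paying into it."""
    summary: dict[str, dict] = defaultdict(lambda: {"total": 0.0, "teams": set()})
    for item in items:
        entry = summary[item.layer]
        entry["total"] += item.monthly_usd
        entry["teams"].add(item.team)
    return dict(summary)


items = [
    CostItem("payments", "copilot", 1200.0),
    CostItem("search", "copilot", 900.0),
    CostItem("search", "inference", 2500.0),
    CostItem("platform", "agents", 700.0),
]
for layer, entry in roll_up_by_layer(items).items():
    print(f"{layer}: ${entry['total']:,.0f}/month across {len(entry['teams'])} team(s)")
```

A layer with several teams paying into it separately is a natural place to ask whether it should become a shared platform cost.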

What Should Actually Count Toward AI Tool Budgets

One of the early mistakes teams make is treating AI spend as only license cost. That underestimates the real footprint, especially when internal usage and support work grow quietly in the background.

A practical breakdown usually includes:

- Copilots and code assistants

- AI testing and QA tools

- Agent platforms and workflow automation tools

- Internal AI infrastructure (embeddings, vector DBs, orchestration)

- Experimentation budgets for teams

The last one is often ignored. But in reality, a lot of spending happens through small experiments that never get formal approval, then stick around long enough to become part of normal delivery work.
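One lightweight way to catch these is to flag any experiment that is still costing money after a fixed window without ever being formally approved. The sketch below assumes a 90-day window and a hypothetical `Experiment` record exported from wherever teams log their trials; both the window and the record shape are illustrative choices, not a standard.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Experiment:
    name: str
    started: date
    monthly_usd: float
    approved: bool  # hypothetical flag: has it passed any formal review?


def stale_experiments(experiments, today: date, max_age_days: int = 90):
    """Return unapproved experiments that are still incurring cost past the window."""
    cutoff = today - timedelta(days=max_age_days)
    return [
        e for e in experiments
        if not e.approved and e.monthly_usd > 0 and e.started <= cutoff
    ]


today = date(2026, 3, 1)
experiments = [
    Experiment("pr-summary-bot", date(2025, 9, 15), 340.0, approved=False),
    Experiment("test-gen-trial", date(2026, 2, 10), 120.0, approved=False),
    Experiment("code-search-embeddings", date(2025, 6, 1), 800.0, approved=True),
]
for e in stale_experiments(experiments, today):
    print(f"{e.name}: running since {e.started}, ${e.monthly_usd:.0f}/month, never approved")
```

A flagged item is not automatically waste; the review simply forces a decision to approve it, fold it into a baseline budget, or shut it down.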

The Biggest Risks in AI Tool Cost Management

Most cost issues do not stem from a single bad decision. They come from accumulation, small approvals, and reasonable local choices that never get reviewed together.

Some common patterns show up repeatedly:

- Multiple vendors are solving the same problem.

- Licenses are assigned but rarely used.

- API usage is growing without visibility.

- Teams are building internal tools that duplicate external tools.

- Productivity gains are assumed but not measured.

None of these is surprising. They arise naturally in fast-moving environments, especially when teams are under pressure to move quickly and demonstrate that they are experimenting.
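Two of these patterns, overlapping vendors and licenses that are assigned but rarely used, can be checked mechanically from seat and usage exports. The sketch assumes each tool is tagged with a capability label and an active-seat count for the review window; the tool names, labels, and the 50% utilization threshold are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Tool:
    name: str
    capability: str    # hypothetical label, e.g. "code-assistant", "test-generation"
    seats: int
    active_seats: int  # seats with any usage during the review window


def review_portfolio(tools, min_utilization: float = 0.5):
    """Flag capabilities covered by more than one tool, and tools with low seat utilization."""
    by_capability = defaultdict(list)
    for tool in tools:
        by_capability[tool.capability].append(tool.name)
    overlaps = {cap: names for cap, names in by_capability.items() if len(names) > 1}

    underused = [
        (tool.name, tool.active_seats / tool.seats)
        for tool in tools
        if tool.seats > 0 and tool.active_seats / tool.seats < min_utilization
    ]
    return overlaps, underused


tools = [
    Tool("AssistantA", "code-assistant", seats=120, active_seats=95),
    Tool("AssistantB", "code-assistant", seats=40, active_seats=12),
    Tool("TestGenX", "test-generation", seats=30, active_seats=21),
]
overlaps, underused = review_portfolio(tools)
print(overlaps)
print(underused)
```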

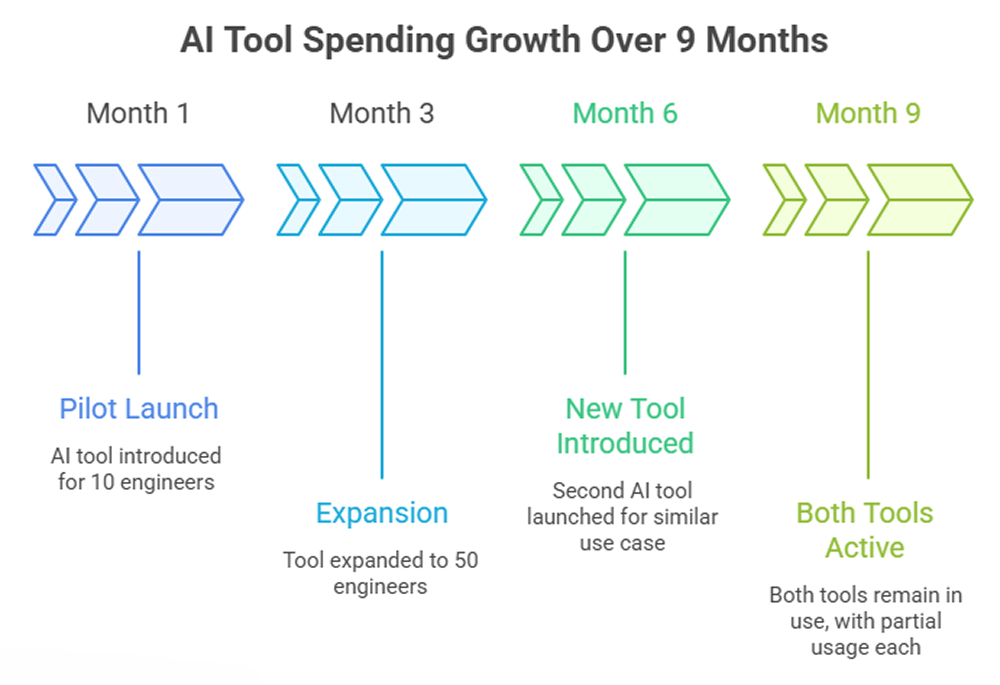

Example of hidden cost growth

Individually, each step feels reasonable. Over time, the cost doubles without a corresponding increase in value. In some cases, teams end up splitting habits across two tools, which weakens both adoption and evaluation.
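A hypothetical walk-through makes the arithmetic concrete. The figures below are invented, not benchmarks; what matters is that no single step looks large, yet the total ends up at twice the first approval.

```python
# Hypothetical sequence of approvals; every figure here is invented for illustration.
steps = [
    ("Team A adopts code assistant X (50 seats at $20)", 50 * 20),
    ("Team B picks assistant Y instead (24 seats at $25)", 24 * 25),
    ("Both teams add API-based review bots", 250),
    ("An agent-workflow pilot keeps running after the trial", 150),
]

baseline = steps[0][1]  # the first, clearly reasonable approval
running = 0
for description, monthly_cost in steps:
    running += monthly_cost
    print(f"+${monthly_cost:,}/month -> ${running:,}/month  ({description})")

print(f"Total vs. first approval: {running / baseline:.1f}x")  # 2.0x
```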

This is where AI tool cost management shifts from cost-cutting to visibility. Leaders need a way to see which tools are genuinely becoming part of engineering work and which ones are just surviving through inertia.

How Engineering Leaders Should Evaluate New AI Developer Tools

Most teams evaluate tools based on demos and initial output quality. That works for short-term decisions but not for budget decisions, because early impressions usually hide long-term friction. A more grounded evaluation looks at how the tool behaves after adoption: what happens once the novelty wears off and the tool has to support real work under normal team conditions.

A few things tend to matter more than expected:

- Adoption depth: Are engineers actually relying on it or just trying it occasionally?

- Workflow fit: Does it reduce steps or add new ones?

- Integration friction: How much setup and maintenance is required?

- Security constraints: Can it meet the organization's requirements for internal code and data?

- Total cost of ownership: Licenses plus usage plus operational overhead
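The criteria above can be turned into a simple scorecard. The sketch assumes 1-5 ratings from the pilot team and weights chosen purely for illustration. It keeps total cost of ownership as a separate number rather than folding it into the score, so the cost side of the decision stays visible.

```python
from dataclasses import dataclass

# Hypothetical weights; each organization would set its own (they sum to 1.0 here).
WEIGHTS = {
    "adoption_depth": 0.30,
    "workflow_fit": 0.25,
    "integration_friction": 0.20,  # rated so that higher = less friction
    "security": 0.25,
}


@dataclass
class Candidate:
    name: str
    scores: dict[str, int]      # 1-5 ratings from the pilot team
    annual_license_usd: float
    annual_usage_usd: float     # API / inference consumption
    annual_ops_usd: float       # setup, maintenance, and support time


def total_cost_of_ownership(c: Candidate) -> float:
    """Licenses plus usage plus operational overhead."""
    return c.annual_license_usd + c.annual_usage_usd + c.annual_ops_usd


def weighted_score(c: Candidate) -> float:
    return sum(WEIGHTS[k] * c.scores[k] for k in WEIGHTS)


tool = Candidate(
    name="ExampleAssistant",  # hypothetical tool
    scores={"adoption_depth": 4, "workflow_fit": 3, "integration_friction": 2, "security": 4},
    annual_license_usd=24_000,
    annual_usage_usd=9_000,
    annual_ops_usd=15_000,
)
print(f"{tool.name}: score {weighted_score(tool):.2f}/5, TCO ${total_cost_of_ownership(tool):,.0f}/year")
```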

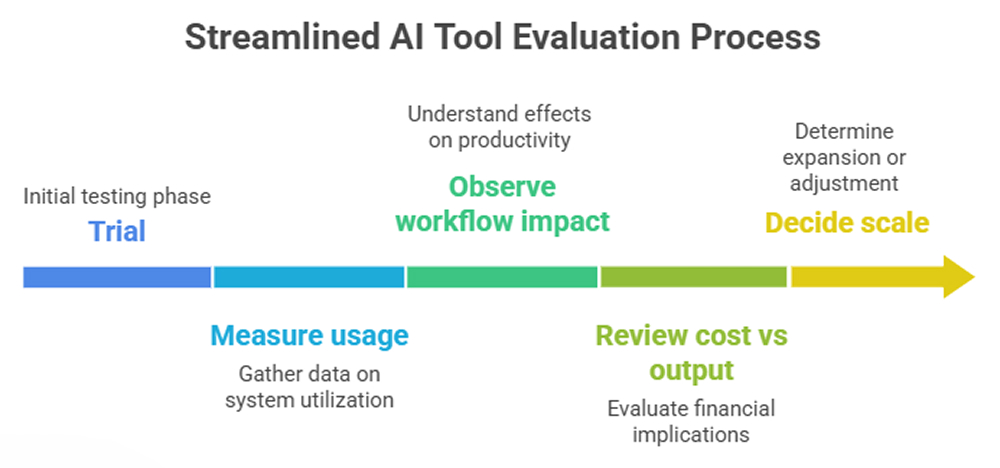

Simple evaluation flow

Skipping the middle steps usually leads to a bad call in one direction or the other. Teams either buy too early because the demo looked strong, or reject something useful because they never gave it enough time in the right context.
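The value of a staged flow is that the middle steps cannot be skipped. Because the exact stages will differ between organizations, the four stages in this sketch (demo, pilot, adoption review, budget decision) are assumptions, not a prescribed process.

```python
from enum import IntEnum


class Stage(IntEnum):
    # Hypothetical stages; the exact steps will vary by organization.
    DEMO = 1
    PILOT = 2            # real work, small group, fixed time box
    ADOPTION_REVIEW = 3  # did usage persist after the novelty wore off?
    BUDGET_DECISION = 4


class Evaluation:
    def __init__(self, tool: str):
        self.tool = tool
        self.stage = Stage.DEMO

    def advance_to(self, target: Stage) -> None:
        """Move forward exactly one stage; attempts to skip stages are rejected."""
        if target != self.stage + 1:
            raise ValueError(f"{self.tool}: cannot go from {self.stage.name} to {target.name}")
        self.stage = target


ev = Evaluation("ExampleAssistant")
try:
    ev.advance_to(Stage.BUDGET_DECISION)  # straight from demo to purchase
except ValueError as err:
    print(err)
ev.advance_to(Stage.PILOT)
print(ev.stage.name)
```

The sketch only models forward movement; a real process would also need an explicit exit at each stage, so that a rejection, like a purchase, happens only after the tool has had a fair trial.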

In practice, some teams also tie evaluations to existing metrics such as cycle time or defect rate. It’s not perfect, but it is enough to avoid guesswork. The goal is not scientific certainty. It is a decision process that can survive scrutiny when budgets get reviewed later.
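A before-and-after comparison on an existing metric can be very small. This sketch assumes per-change cycle times can be exported for the same team before and during a pilot; "hours from first commit to merge" is used as an assumed definition, and the numbers are invented. It compares medians because a handful of unusually slow changes can distort an average.

```python
from statistics import median

# Hypothetical cycle times in hours (first commit to merge) for one team.
before = [30, 42, 28, 55, 39, 47, 33]
during = [26, 35, 31, 40, 29, 38, 27]


def relative_change(old: list[float], new: list[float]) -> float:
    """Relative change in median cycle time; negative means it went down."""
    return (median(new) - median(old)) / median(old)


change = relative_change(before, during)
print(f"Median cycle time: {median(before)}h -> {median(during)}h ({change:+.0%})")
```

A shift like this is directional: it says the metric moved during the pilot, not that the tool caused it.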

When leaders assess AI developer tools, they also need to distinguish between convenience and durable value. Something that saves a few minutes occasionally is not the same as something that changes the shape of delivery work week after week.

Building a Smarter Budgeting Model for Engineering AI Tools

A fixed annual budget does not work well for AI tooling. Usage is variable, new tools appear constantly, and teams experiment. The spending pattern looks more like cloud usage mixed with SaaS procurement than a stable software category.

A more flexible model tends to work better. It gives leaders room to support experimentation without pretending that every dollar can be forecasted in advance.

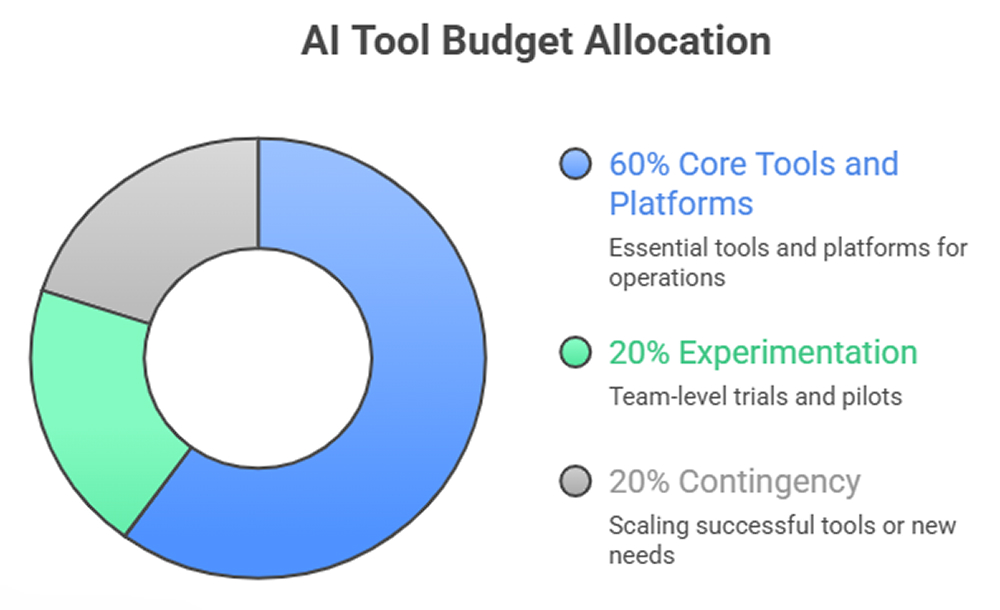

One approach that shows up in mature teams looks like this:

- Establish a baseline allocation for shared platform systems.

- Define a controlled experimentation budget for teams.

- Tie periodic review cycles to actual usage.

Example budgeting structure

This avoids locking the organization into early decisions while still preventing uncontrolled growth. It also creates a healthier conversation with finance because the unpredictable part of the budget is named upfront instead of appearing later as a surprise.
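Reviewing the baseline and experimentation lanes against actual usage can start as a simple ratio check. The sketch assumes quarterly figures per lane and a 10% tolerance before a lane is flagged; the lane names, amounts, and tolerance are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Lane:
    name: str
    allocated_usd: float  # per review period, e.g. a quarter
    spent_usd: float


def review_lanes(lanes, tolerance: float = 0.10):
    """Compare actual spend to allocation; flag lanes more than `tolerance` over."""
    report = []
    for lane in lanes:
        ratio = lane.spent_usd / lane.allocated_usd
        status = "over" if ratio > 1 + tolerance else "ok"
        report.append((lane.name, round(ratio, 2), status))
    return report


# Hypothetical quarterly figures.
lanes = [
    Lane("baseline-platform", allocated_usd=60_000, spent_usd=57_500),
    Lane("team-experimentation", allocated_usd=15_000, spent_usd=19_200),
]
for name, ratio, status in review_lanes(lanes):
    print(f"{name}: {ratio:.0%} of allocation ({status})")
```

An "over" flag on the experimentation lane is not necessarily bad news; it is the prompt to decide whether a tool has earned a place in the baseline.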

Some teams also introduce lightweight approval gates, not for every tool, but for scaling decisions beyond a certain threshold. That threshold might be tied to headcount, contract size, or expected monthly usage, depending on how the organization buys software.
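An approval gate for scaling decisions can be expressed as a few explicit thresholds. The sketch below uses seats as a stand-in for headcount, alongside contract size and expected monthly usage; every threshold value and name is a hypothetical placeholder for whatever the organization's procurement rules actually say.

```python
from dataclasses import dataclass

# Hypothetical thresholds; set these from how the organization buys software.
MAX_SEATS_WITHOUT_REVIEW = 50
MAX_ANNUAL_CONTRACT_USD = 25_000
MAX_MONTHLY_USAGE_USD = 3_000


@dataclass
class ScalingRequest:
    tool: str
    seats: int
    annual_contract_usd: float
    expected_monthly_usage_usd: float


def approval_reasons(req: ScalingRequest) -> list[str]:
    """Return the thresholds a request crosses; an empty list means no gate applies."""
    reasons = []
    if req.seats > MAX_SEATS_WITHOUT_REVIEW:
        reasons.append(f"{req.seats} seats > {MAX_SEATS_WITHOUT_REVIEW}")
    if req.annual_contract_usd > MAX_ANNUAL_CONTRACT_USD:
        reasons.append(f"contract ${req.annual_contract_usd:,.0f} > ${MAX_ANNUAL_CONTRACT_USD:,}")
    if req.expected_monthly_usage_usd > MAX_MONTHLY_USAGE_USD:
        reasons.append(
            f"usage ${req.expected_monthly_usage_usd:,.0f}/month > ${MAX_MONTHLY_USAGE_USD:,}"
        )
    return reasons


small = ScalingRequest("ExampleAssistant", seats=20, annual_contract_usd=9_600, expected_monthly_usage_usd=0)
large = ScalingRequest("ExampleAssistant", seats=200, annual_contract_usd=96_000, expected_monthly_usage_usd=1_500)
print(approval_reasons(small))  # []: no gate applies, the team proceeds
print(approval_reasons(large))
```

Returning the reasons rather than a bare yes/no gives the approver and the requesting team the same view of why a request was escalated.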

Where Budget Ownership Should Sit

Ownership is often unclear, which creates gaps. The cost is real, but the decision rights are usually split across engineering managers, platform teams, procurement, and finance.

If budgets sit entirely with engineering, platform-level costs can be overlooked. If they sit centrally, teams may feel blocked. Both models create friction for different reasons, and both can hide important tradeoffs.

A mixed model tends to work better:

- The platform team owns shared infrastructure and APIs.

- Engineering leadership owns team-level tooling.

- Finance or central ops provides visibility and guardrails.

This keeps decisions close to usage while still maintaining oversight. It also makes it easier to determine who is responsible when a tool is underused, overlaps, or suddenly becomes much more expensive than expected.
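The split above can be written down as an explicit mapping from cost category to owner, with central visibility on every line. The category names here are hypothetical; the useful property is that anything not mapped comes back as unassigned instead of silently falling between teams.

```python
from typing import Optional

# Hypothetical category names and owners, mirroring the mixed model above.
OWNERS = {
    "shared_infrastructure": "platform team",
    "model_api": "platform team",
    "team_tooling": "engineering leadership",
}
OVERSIGHT = "finance / central ops"


def route(category: str, team: Optional[str] = None) -> dict:
    """Return who decides on a cost line and who gets visibility into it."""
    owner = OWNERS.get(category)
    if owner is None:
        # An unmapped category is an ownership gap worth surfacing in review.
        owner = "UNASSIGNED"
    elif owner == "engineering leadership" and team:
        owner = f"engineering leadership ({team})"
    return {"owner": owner, "visibility": OVERSIGHT}


print(route("model_api"))
print(route("team_tooling", team="checkout"))
print(route("agent_platform"))  # owner: UNASSIGNED
```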

Tracking Impact Without Overcomplicating It

Measuring ROI on AI tooling is still messy. But ignoring it is worse. Most organizations do not need a perfect model on day one, but they do need something better than intuition. This is also where tools like Milestone help by giving leaders visibility into adoption, workflow impact, and how GenAI tools actually affect engineering output.

Most teams start with simple signals:

- Changes in cycle time

- Reduction in repetitive tasks

- Output per engineer (rough, but directional)

- Adoption rates over time

These are not perfect metrics. But they help identify whether a tool is actually used and whether it changes how work gets done. Even rough measures become useful when they are reviewed consistently instead of only during annual planning.
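Adoption rate over time is among the easiest of these signals to compute consistently. This sketch assumes monthly snapshots of active users and licensed seats (the figures are invented) and classifies the direction by comparing the first and last rates, treating a small band as flat. That rule is deliberately crude, in the spirit of starting rough and refining later.

```python
# Hypothetical monthly snapshots: (month, active users, licensed seats).
snapshots = [
    ("2026-01", 42, 120),
    ("2026-02", 61, 120),
    ("2026-03", 74, 120),
    ("2026-04", 79, 120),
]


def adoption_trend(rows, flat_band: float = 0.02):
    """Adoption rate per month, plus a direction based on first vs. last rate."""
    rates = [(month, active / seats) for month, active, seats in rows]
    delta = rates[-1][1] - rates[0][1]
    if delta > flat_band:
        direction = "rising"
    elif delta < -flat_band:
        direction = "falling"
    else:
        direction = "flat"
    return rates, direction


rates, direction = adoption_trend(snapshots)
for month, rate in rates:
    print(f"{month}: {rate:.0%}")
print("direction:", direction)
```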

Trying to build a perfect measurement system early usually slows everything down. Teams end up arguing over methodology rather than learning whether the tool deserves broader adoption. A practical approach is to start rough, then refine. The important thing is to keep the measurement close to real engineering work rather than forcing teams into a reporting exercise they do not trust.

Final Thoughts

AI adoption is not slowing down. The challenge is not whether to invest, but how to keep those investments grounded in actual engineering priorities instead of momentum and internal hype.

Strong budget discipline does not block innovation. It makes sure the tools teams rely on are actually worth keeping, scaling, and supporting over time.

FAQs

1. How much should engineering teams budget for AI tools in 2026?

There is no fixed percentage. Most teams allocate a baseline for core tools and reserve additional budget for experiments and scaling. The stronger approach is to define spending lanes clearly rather than picking a number that looks neat in a planning sheet. That usually gives leaders more room to adjust spending as usage patterns become clearer.

2. What factors should leaders weigh when approving new AI tools?

Adoption potential, workflow fit, integration effort, security impact, and total cost over time. Initial output quality alone is not enough for long-term decisions, especially when the tool will be used inside everyday engineering workflows. A tool that looks impressive in a trial can still fail if teams do not use it consistently.

3. How can engineering leaders measure ROI on AI tooling budgets?

By tracking usage, workflow changes, and directional productivity signals like cycle time or reduced manual effort. Exact ROI is hard to calculate, but trends are usually visible if teams review them over time instead of expecting immediate proof. The goal is usually to spot meaningful patterns, not to produce a perfect financial formula.

4. Should AI tool budgets sit with engineering, product, or a central platform team?

A shared model works best. Platform teams handle infrastructure, engineering owns team tools, and central teams provide oversight. That split usually reflects how the cost actually behaves in practice. It also makes accountability clearer when spending starts to rise or an overlap appears across teams.

5. How can teams avoid wasted spend on overlapping or underused AI tools?

Regular portfolio reviews, clear ownership, and visibility into usage help reduce duplication and unused licenses. The longer teams wait to review the portfolio, the more likely it is that temporary experiments will turn into silent, recurring costs. Even a lightweight review process can prevent small tool decisions from becoming long-term budget drag.