Generative AI has moved beyond the experimentation phase. It now shows up in code assistants, support workflows, internal search, test generation, documentation, and product features. For engineering leaders, that shift changes the conversation. The question is no longer whether teams will use these systems, but how to scale them safely without creating hidden risk across security, compliance, cost, and operations.

This is where AI governance becomes practical rather than theoretical. In an engineering setting, governance is not a policy deck that sits untouched in a shared folder. It is the working system of decision-making, controls, ownership, and review processes that enables teams to adopt GenAI safely at speed. A good approach provides developers with clarity rather than friction, and leaders with visibility rather than surprises.

The challenge is that GenAI spreads quickly. One team starts with a coding assistant. Another adds an internal chatbot. A platform group integrates model APIs into a service. A product team ships AI features to customers. Soon, the organization may have multiple vendors, multiple prompts, multiple data flows, and very little consistency. Without structure, scale starts to work against you.

What AI Governance Means for Engineering Leaders

For engineering leaders, governance should be treated as an operational layer. It sits between experimentation and production, and it answers a few basic questions:

- What tools are approved?

- What data can be used?

- Who owns model behavior in production?

- What gets logged, reviewed, and monitored?

- How do incidents get escalated?

In simple terms, governance is the mix of policies, technical controls, and accountability required to use GenAI responsibly. It matters because these systems do not behave like traditional software. A model can return different answers to similar prompts. It can expose sensitive data if integrated poorly. It can cause cost spikes due to untracked usage. It can also fail in ways that are harder to predict than ordinary application bugs.

Engineering leaders do not need a perfect framework on day one. They need a usable one. The goal is to create enough structure so that teams can move with confidence while the organization keeps control over risk, quality, and cost.

Why GenAI at Scale Creates a Governance Challenge

Small pilots are easy to tolerate because their blast radius is limited. Production adoption is different. Once GenAI becomes part of delivery pipelines or customer-facing systems, complexity grows faster than most teams expect.

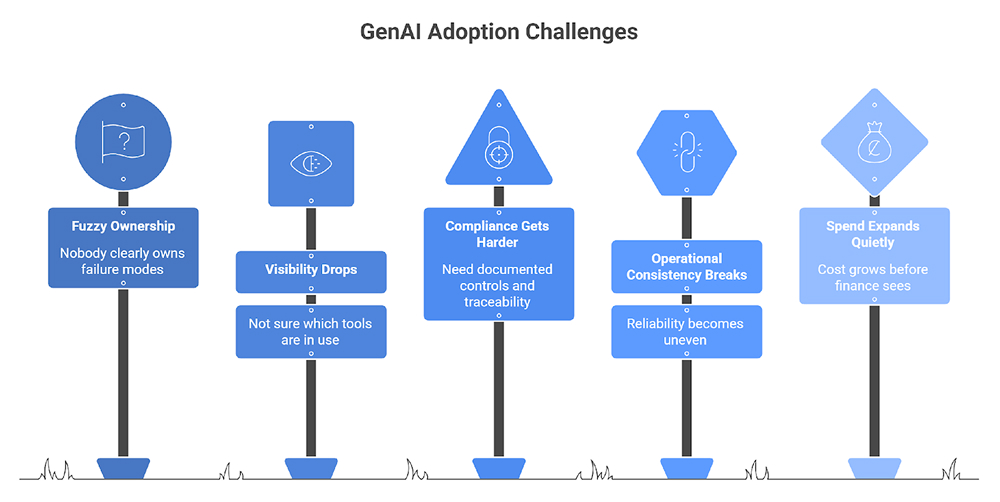

A few pressure points tend to show up early:

- Ownership becomes fuzzy: Product teams may own the user experience, platform teams may own integrations, security may own reviews, and nobody may clearly own failure modes.

- Visibility drops: Teams often know which applications they deployed, but not which prompts, models, or external tools are actually in use across the stack.

- Compliance gets harder: The moment customer data, internal documents, or regulated workflows come into play, leaders need documented controls and traceability.

- Operational consistency breaks: Different teams pick different vendors, use different evaluation methods, and follow different fallback patterns, which makes reliability uneven.

- Spend expands quietly: Token consumption, model retries, and tool sprawl can drive cost long before finance sees the full picture.

This is why governance has to grow with adoption. It is not a brake on engineering work. It is what prevents scattered AI usage from turning into an unmanaged platform problem.

How to Build an AI Governance Framework That Supports Speed

The most effective AI governance framework is one that standardizes the right things and leaves room for teams to build. It should reduce duplicate decision-making and make risk review predictable. In practice, that means defining a few non-negotiable controls, then enabling teams with shared patterns and approved tooling.

Start with ownership. Every AI-enabled system should have a clearly named owner, even if several teams contribute to it. That owner does not need to personally review every prompt or metric, but they do need to be responsible for operational quality, incident response, and policy compliance. Without this, governance remains everyone’s job and nobody’s responsibility.
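As a rough illustration of the ownership rule, a team could keep a small inventory of AI-enabled systems and flag any entry without a named owner. The sketch below is only one way to do this; the `AISystem` record, its fields, and the sample system names are hypothetical and not taken from any particular tool.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class AISystem:
    name: str
    owner: Optional[str]               # team or person accountable for the system
    contributors: Tuple[str, ...] = () # teams that contribute but are not accountable


def systems_without_owner(systems):
    """Return the names of systems that have no named owner."""
    return [s.name for s in systems if not (s.owner and s.owner.strip())]


# Hypothetical inventory entries, for illustration only.
registry = [
    AISystem("support-assistant", owner="support-platform", contributors=("security",)),
    AISystem("internal-search", owner=None, contributors=("platform", "product")),
]

print(systems_without_owner(registry))
```

A check like this could run on a schedule or in CI so that a system with contributors but no accountable owner is surfaced before it reaches production.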

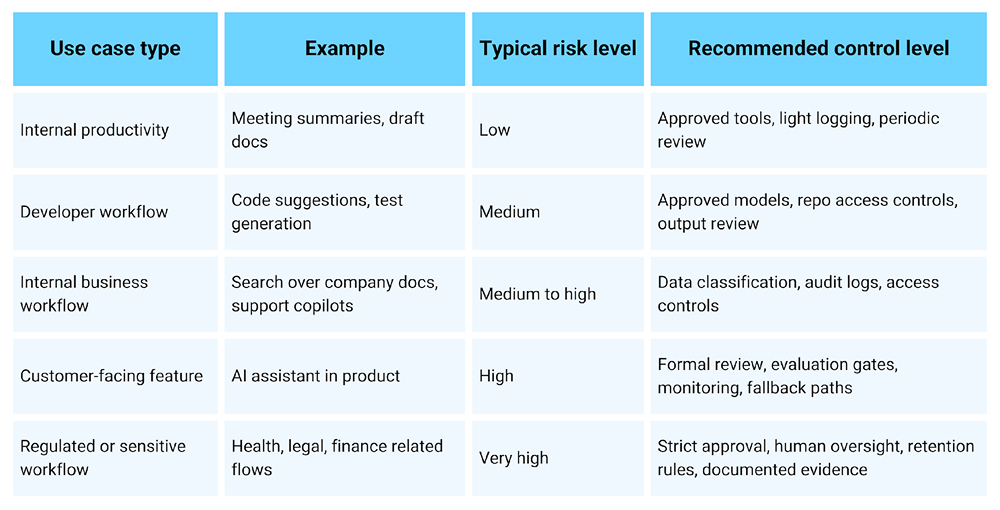

Next, classify use cases by risk. Not every GenAI workflow deserves the same level of review. An internal summarization tool is not the same as a customer-facing recommendation engine or an assistant that handles sensitive records. A risk-based model helps teams move faster because review effort matches impact.

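A simple way to think about it: the more sensitive the data and the more directly the output reaches customers, the deeper the review should be.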
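One way to make risk tiers concrete is a small classification function. The sketch below is a minimal example under stated assumptions: the three tier names, the two attributes (`customer_facing`, `handles_sensitive_data`), and the rule ordering are illustrative choices, not a prescribed standard.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class UseCase:
    name: str
    customer_facing: bool
    handles_sensitive_data: bool


def classify(use_case: UseCase) -> RiskTier:
    """Assign a review tier. Assumed rule: sensitive data first, then customer exposure."""
    if use_case.handles_sensitive_data:
        return RiskTier.HIGH
    if use_case.customer_facing:
        return RiskTier.MEDIUM
    return RiskTier.LOW


examples = [
    UseCase("internal summarization tool", customer_facing=False, handles_sensitive_data=False),
    UseCase("customer-facing recommendation engine", customer_facing=True, handles_sensitive_data=False),
    UseCase("assistant handling sensitive records", customer_facing=True, handles_sensitive_data=True),
]

for uc in examples:
    print(f"{uc.name}: {classify(uc).value}")
```

Real classifications usually need more inputs (regulatory scope, autonomy of the system, reversibility of actions), but even a coarse rule like this lets review effort scale with impact.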

After classification, define the minimum controls that apply across all teams. Keep this list short and clear. If the baseline becomes too heavy, teams will work around it. A strong starting set usually includes:

- Approved vendors and model providers

- Data handling rules for prompts, outputs, and logs

- Access controls for APIs, tools, and embeddings

- Logging standards for prompts, responses, model versions, and cost

- Human review requirements for high-risk use cases

- Escalation steps for quality or security incidents

From there, give teams reusable building blocks. This is where governance starts to help speed rather than slow it. Instead of asking every team to invent its own process, platform and security leaders can provide standard patterns such as a vetted model gateway, shared observability, approved prompt libraries, secure retrieval architecture, and evaluation pipelines. Standardization reduces both confusion and review time.
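To show what a vetted model gateway might look like in its simplest form, here is a sketch that routes every call through one class, rejects unapproved providers, and records usage metadata. Everything named here is an assumption for illustration: the provider names are placeholders, the backends are stub callables rather than real vendor SDK calls, and the audit fields are one possible choice.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Placeholder provider names; a real list would come from the approved-vendor policy.
APPROVED_PROVIDERS = {"provider-a", "provider-b"}


class ProviderNotApproved(Exception):
    """Raised when a call targets a provider outside the approved list."""


@dataclass
class ModelGateway:
    # Maps provider name to a callable that takes a prompt and returns text.
    backends: Dict[str, Callable[[str], str]]
    audit_log: List[dict] = field(default_factory=list)

    def complete(self, provider: str, team: str, prompt: str) -> str:
        if provider not in APPROVED_PROVIDERS or provider not in self.backends:
            raise ProviderNotApproved(provider)
        response = self.backends[provider](prompt)
        # Record metadata only (sizes, not content) to keep the log privacy-aware.
        self.audit_log.append({
            "ts": time.time(),
            "team": team,
            "provider": provider,
            "prompt_chars": len(prompt),
            "response_chars": len(response),
        })
        return response


# Stub backend standing in for a real model API.
gateway = ModelGateway(backends={"provider-a": lambda p: "stub reply to: " + p})
reply = gateway.complete("provider-a", team="search", prompt="hello")
print(reply, len(gateway.audit_log))
```

Because every team calls the same entry point, vendor approval, logging, and cost attribution are enforced in one place instead of being re-implemented per service.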

Another important step is to separate experimentation from production. Teams should be able to test ideas quickly in sandboxed environments, but promotion into production should require a short, repeatable checklist. That checklist might cover data sources, model choice, fallback behavior, evaluation results, user impact, and security review. When the path is predictable, teams stop seeing governance as a blocker.
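A promotion checklist like the one described can be encoded as data so the gate is the same for every team. The sketch below assumes a simple dictionary of completed items; the key names mirror the checklist topics above but are otherwise hypothetical.

```python
# Checklist topics taken from the paragraph above; the key names are illustrative.
REQUIRED_ITEMS = (
    "data_sources",
    "model_choice",
    "fallback_behavior",
    "evaluation_results",
    "user_impact",
    "security_review",
)


def missing_items(checklist: dict) -> list:
    """Return required items that are absent or not yet marked complete."""
    return [item for item in REQUIRED_ITEMS if not checklist.get(item)]


def can_promote(checklist: dict) -> bool:
    """A system may move to production only when nothing is missing."""
    return not missing_items(checklist)


draft = {
    "data_sources": True,
    "model_choice": True,
    "fallback_behavior": False,  # not yet defined
    "evaluation_results": True,
    "user_impact": True,
}

print(can_promote(draft), missing_items(draft))
```

Returning the list of missing items, rather than a bare yes or no, tells the team exactly what stands between them and production.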

Engineering leaders should also create feedback loops. Early governance often focuses too much on approval and too little on learning. In reality, production systems change. Models change. User behavior changes. So governance should include a process for reviewing incidents, refining controls, retiring unsafe patterns, and updating approved tooling over time.

The Main AI Governance Risks to Address Early

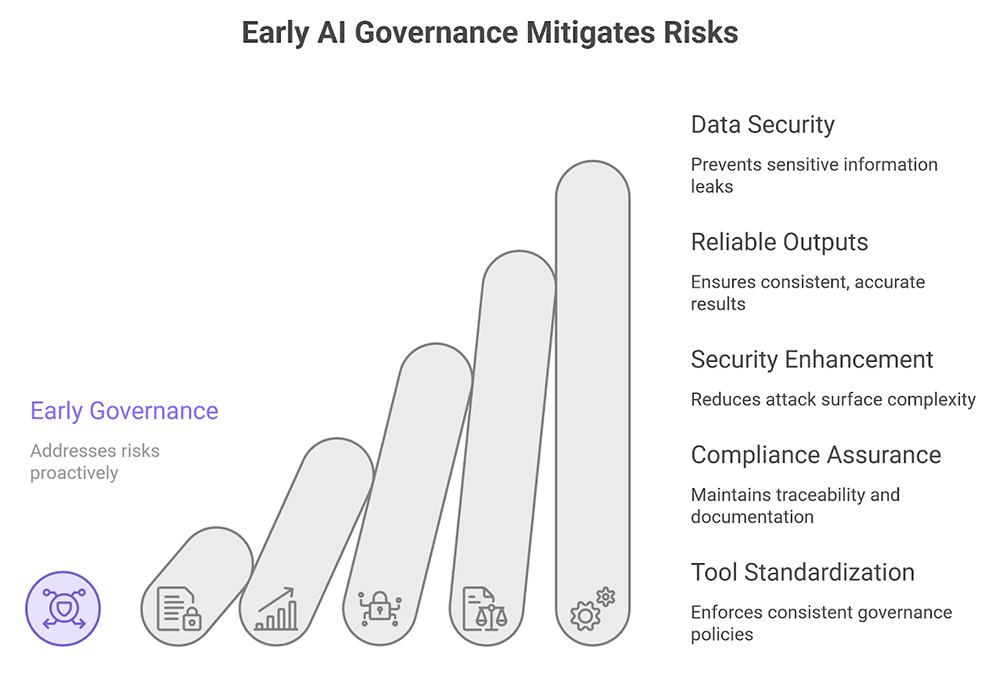

The most common failures are rarely dramatic on day one. They usually start as small gaps that compound over time. That is why engineering leaders should address key AI governance risks early, before GenAI becomes deeply embedded across the organization.

1. Data Leakage

Prompts can include source code, customer records, product plans, or internal knowledge. If teams use external tools without clear controls, sensitive information can end up in places it should never go. Strong input policies, approved vendors, and clear retention rules matter here.
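An input policy can be partly enforced in code by redacting obvious sensitive patterns before a prompt leaves the organization. The following is a minimal sketch, not a complete data loss prevention solution: the two regular expressions (email addresses and a hypothetical `sk-` style key format) are illustrative assumptions and will miss many real cases.

```python
import re

# Illustrative patterns only; real deployments need a broader, tested rule set.
PATTERNS = [
    (re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"), "[EMAIL]"),
    (re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"), "[SECRET]"),  # hypothetical key format
]


def redact(text: str) -> str:
    """Replace each matched pattern with a placeholder before the text is sent or logged."""
    for pattern, placeholder in PATTERNS:
        text = pattern.sub(placeholder, text)
    return text


prompt = "Contact jane.doe@example.com about key sk-abcdefghijklmnop1234"
print(redact(prompt))
```

The same function can be applied to logs, so that retention rules cover redacted text rather than raw prompts.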

2. Unreliable Outputs

Models can hallucinate, omit important context, or produce inconsistent results. In engineering workflows, those failures can affect code quality, debugging decisions, support accuracy, or customer trust. High-risk systems need evaluations, guardrails, and fallbacks rather than blind trust.
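A basic guardrail with a fallback might validate the structure of a model's output before anything downstream uses it. This sketch assumes the model was asked to return JSON with `answer` and `sources` fields; that schema and the fallback payload are hypothetical, and structural checks like these do not verify factual accuracy.

```python
import json

# Hypothetical fallback payload used when the output cannot be trusted structurally.
FALLBACK = {"status": "needs_human_review"}


def validated_answer(raw_output: str, required_keys=("answer", "sources")) -> dict:
    """Return the parsed output if it passes basic checks, otherwise the fallback."""
    try:
        parsed = json.loads(raw_output)
    except json.JSONDecodeError:
        return dict(FALLBACK)
    if not isinstance(parsed, dict) or any(k not in parsed for k in required_keys):
        return dict(FALLBACK)
    if not parsed["sources"]:
        # An answer with no supporting sources is routed to a human instead.
        return dict(FALLBACK)
    return parsed


good = '{"answer": "Restart the worker", "sources": ["runbook-12"]}'
bad = "Sure! Here is your answer..."

print(validated_answer(good))
print(validated_answer(bad))
```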

3. Security Issues

Security risks have become more complex. Prompt injection, insecure tool access, over-permissioned agents, and vulnerable integrations can all expand the attack surface. The risk is not just the model itself. It is the entire chain of retrieval, tools, APIs, and external dependencies.
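One common way to limit over-permissioned agents is a per-agent tool allowlist checked at the point of invocation. The sketch below illustrates the idea under assumptions: the agent names, tool names, and `invoke_tool` helper are hypothetical, and the tools are stub functions.

```python
# Hypothetical mapping of agent to the tools it is permitted to call.
AGENT_TOOL_ALLOWLIST = {
    "support-agent": {"search_kb", "create_ticket"},
    "docs-agent": {"search_kb"},
}


class ToolNotPermitted(Exception):
    """Raised when an agent requests a tool outside its allowlist."""


def invoke_tool(agent: str, tool: str, tools: dict, **kwargs):
    """Run a tool only if the agent is explicitly allowed to use it (deny by default)."""
    allowed = AGENT_TOOL_ALLOWLIST.get(agent, set())
    if tool not in allowed:
        raise ToolNotPermitted(f"{agent} may not call {tool}")
    return tools[tool](**kwargs)


# Stub tool implementations for illustration.
tools = {
    "search_kb": lambda query: f"results for {query}",
    "create_ticket": lambda title: f"ticket created: {title}",
}

print(invoke_tool("docs-agent", "search_kb", tools, query="rate limits"))
```

Because unknown agents get an empty allowlist, a tool request that arrives through prompt injection fails closed unless that agent was deliberately granted the tool.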

4. Compliance Gaps

Compliance gaps appear when teams move faster than documentation can keep up. Leaders may know a system exists, but not whether it was reviewed, what data it touches, or how decisions are logged. In regulated environments, that lack of traceability becomes a serious problem.

5. Tool Sprawl

When every team chooses different assistants, plugins, monitoring tools, and model providers, governance becomes nearly impossible to enforce consistently. Standardization does not remove flexibility. It protects the organization from fragmentation.

AI Governance Monitoring in Production

Governance is incomplete without runtime visibility. Once systems are live, leaders need AI governance monitoring that goes beyond uptime. Traditional monitoring tells you whether a service is available. AI-specific monitoring tells you whether it is still behaving acceptably.

A practical monitoring program should track:

- Input and output anomalies

- Prompt and response logging with proper redaction

- Model version changes and performance drift

- Tool invocation patterns for agentic systems

- Cost by service, team, and use case

- Escalations tied to unsafe or low-quality behavior

This does not mean collecting everything forever. It means capturing enough structured evidence to understand what the system did, what changed, and where risks are building. For engineering leaders, that visibility turns governance from a policy exercise into an operational discipline.
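As a small example of structured evidence, usage records that carry service, team, and use-case fields can be rolled up into the cost views listed above. The record shape and field names below are assumptions for illustration; the sample figures are made up.

```python
from collections import defaultdict


def cost_by(records, *keys):
    """Sum cost_usd across records, grouped by the given field names."""
    totals = defaultdict(float)
    for record in records:
        totals[tuple(record[k] for k in keys)] += record["cost_usd"]
    return dict(totals)


# Hypothetical usage records, for example as emitted by a shared gateway.
records = [
    {"service": "search", "team": "platform", "use_case": "qa", "model_version": "m-1", "cost_usd": 0.5},
    {"service": "search", "team": "platform", "use_case": "qa", "model_version": "m-1", "cost_usd": 0.25},
    {"service": "support-bot", "team": "support", "use_case": "summarize", "model_version": "m-2", "cost_usd": 1.0},
]

print(cost_by(records, "team"))
print(cost_by(records, "service", "use_case"))
```

The same grouping approach works for other dimensions in the record, such as `model_version`, which makes it easier to see what changed when cost or quality shifts.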

Conclusion

GenAI creates real leverage for engineering teams, but scale without control is expensive. It creates hidden exposure, inconsistent delivery, and difficult incident response. The answer is not to slow down experimentation. It is to create a clear operating model that lets teams move fast inside well-defined boundaries.

Strong governance helps leaders standardize tools, clarify ownership, manage risk, and build trust in production systems. In other words, it provides the organization with the structure needed to scale GenAI responsibly. That is the point. Not less innovation, but better control over how innovation reaches production.

FAQs

1. What is AI governance for GenAI in engineering teams?

It is the set of rules, responsibilities, and technical controls that guide how engineering teams use GenAI systems. That includes approved tools, data handling rules, monitoring, review steps, and ownership. The purpose is to scale usage safely while maintaining quality, security, and accountability.

2. Why do engineering leaders need AI governance when scaling GenAI?

As GenAI use expands, teams add more models, vendors, prompts, and integrations, and each addition creates new workflows and data flows to manage. Without governance, engineering leaders lose control over risk, cost, and quality. Governance helps teams move faster while reducing security exposure and maintaining operational consistency.

3. What AI policies should engineering teams put in place first?

Start with policies for approved tools, sensitive data handling, logging, access control, and review requirements for higher-risk use cases. These policies should be short, specific, and easy to follow. The goal is not policy volume. The goal is to give teams clear boundaries they can actually use.

4. How can leaders monitor GenAI risks in production systems?

They should track output quality, unusual prompt patterns, model drift, tool usage, cost growth, and incident trends. Logging must be structured and privacy-aware. Monitoring should also connect to escalation workflows so teams can act quickly when quality, security, or compliance issues arise.

5. Who should own AI governance in an engineering organization?

Ownership should be shared, but not vague. Platform, security, legal, and product stakeholders all contribute, yet each AI-enabled system still needs a named engineering owner. That person or team is responsible for production behavior, compliance with standards, and coordinating response when issues surface.